Chapter 1. Statistics

Introduction

Let’s say you do an experiment, measure something, or make an observation. To be sure that your result is repeatable, you conduct the experiment several times, or you measure the same thing in different individuals, or you make your observation at several different time points during the experiment. Whatever your experiment, measurement, or observation, the resulting numbers are somewhat scattered. They scatter because conditions may differ in some subtle way from one experiment to the next, one individual differs from another, or situations may change slightly through time. The scatter of numbers is known as a distribution.

To take a concrete example, suppose you were interested in the adult height of the population of men or women in some city. While you are interested in the population of men or women as a whole, in practice you are unlikely to be able to measure the height of every adult man or woman. Instead you take a sample of men or women, ideally chosen at random without regard to height, and measure the height of each individual in the sample. Your sample yields a distribution of heights, and your intention is to make inferences about the entire population based on what you see in the sample.

By itself, collecting the data on height doesn’t tell you much. To go further, you need to carry out some sort of analysis. Together, collecting data from a sample of a population and analyzing these data to make inferences about the population as a whole constitutes the field of statistics.

The Normal Distribution

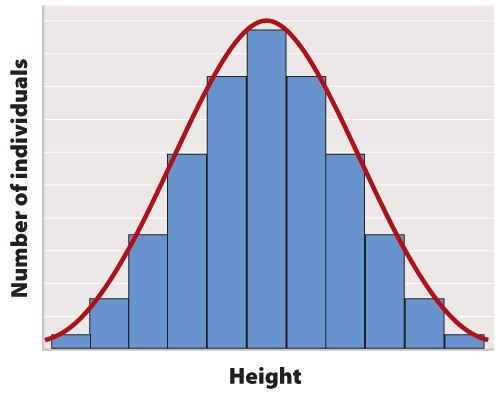

The first step in statistical analysis of data is usually to prepare some visual representation. In the case of height, this is easily done by grouping nearby heights together and plotting the result as a histogram like that shown in Figure 1. The smooth, bell-shaped curve approximating the histogram in Figure 1A is called the normal distribution. If you measured the height of more and more individuals, then you could make the width of each bar in the histogram narrower and narrower, and the shape of the histogram would gradually get closer and closer to the normal distribution.

The normal distribution does not arise by accident but is a consequence of a fundamental principle of statistics which states that when many independent factors act together to determine the magnitude of a trait, the resulting distribution of the trait is normal. Human height is one such trait because it results from the cumulative effect of many different genetic factors as well as environmental effects such as diet and exercise. The cumulative effect of the many independent factors affecting height results in a normal distribution.

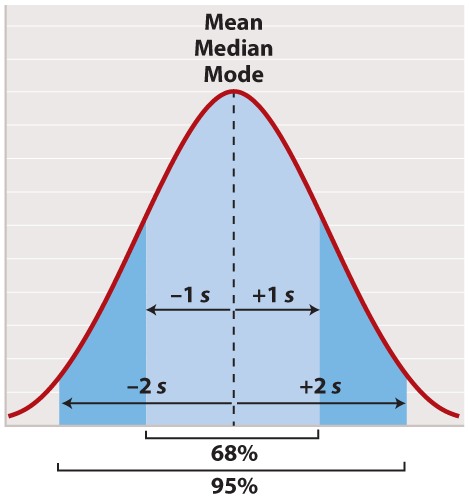

The normal distribution appears in countless applications in biology. Its shape is completely determined by two quantities. One is the mean, which tells you the location of the peak of the distribution along the x-axis (Figure 2). While we do not know the mean of the population as a whole, we do know the mean of the sample, which is equal to the arithmetic average of all the measurements—the value of all of the measurements added together and divided by the number of measurements.

In symbols, suppose we sample n individuals and let xi be the value of the ith measurement, where i can take on the values 1, 2, ..., n. Then the mean of the sample (often symbolized ) is given by

, where the symbol

means “sum” and

means x1 + x2 + ... + xn.

For a normal distribution, the mean coincides with another quantity called the median. The median is the value along the x-axis that divides the distribution exactly in two—half the measurements are smaller than the median, and half are larger than the median. The mean of a normal distribution coincides with yet another quantity called the mode. The mode is the value most frequently observed among all the measurements.

The second quantity that characterizes a normal distribution is its standard deviation (“s” in Figure 2), which measures the extent to which most of the measurements are clustered near the mean. A smaller standard deviation means a tighter clustering of the measurements around the mean. The true standard deviation of the entire population is unknown, but we can estimate it from the sample as

What this complicated-looking formula means is that we calculate the difference between each individual measurement and the mean, square the difference, add these squares across the entire sample, divide by n - 1, and take the square root of the result. The division by n - 1 (rather than n) may seem mysterious; however, it has the intuitive explanation that it prevents anyone from trying to estimate a standard deviation based on a single measurement (because in that case n - 1 = 0).

In a normal distribution, approximately 68% of the observations lie within one standard deviation on either side of the mean (Figure 2, light blue), and approximately 95% of the observations lie within two standard deviations on either side of the mean (Figure 2, light and darker blue together). You may recall political polls of likely voters that refer to the margin of error; this is the term that pollsters use for two times the standard deviation. It is the margin within which the pollster can state with 95% confidence the true percentage of likely voters favoring each candidate at the time the poll was conducted.

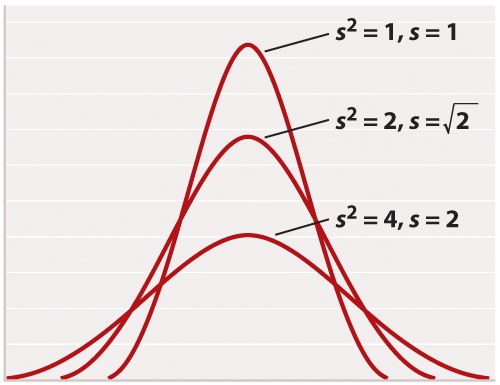

For reasons rooted in the history of statistics, the standard deviation is often stated in terms of s2 rather than s. The square of the standard deviation is called the variance of the distribution. Both the standard deviation and the variance are measures of how closely most data points are clustered around the mean. Not only is the standard deviation more easily interpreted than the variance (Figure 2), but also it is more intuitive in that standard deviation is expressed in the same units as the mean (for example, in the case of height, inches), whereas the variance is expressed in the square of the units (for example, inches2). On the other hand, the variance is the measure of dispersal around the mean that more often arises in statistical theory and the derivation of formulas. Figure 3 shows how increasing variance of a normal distribution corresponds to greater variation of individual values from the mean. Since all of the distributions in Figure 3 are normal, 68% of the values lie within one standard deviation of the mean, and 95% within two standard deviations of the mean.

Another measure of how much the numerical values in a sample are scattered is the range. As its name implies, the range is the difference between the largest and the smallest values in the sample. The range is a less widely used measure of scatter than the standard deviation.

Other Types of Distributions

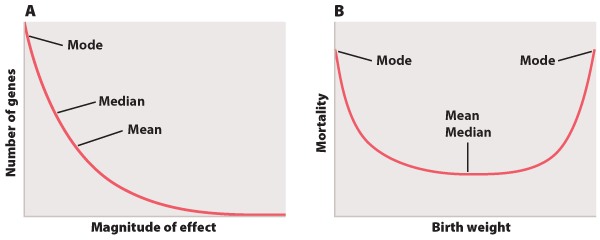

Not all measurements follow the normal distribution. There are a wide variety of alternatives, but Figure 4 depicts two that are frequently encountered.

The distribution in Figure 4A shows the magnitude of individual gene effects on a trait like height affected by many genes. Most of the genes affecting the trait have small effects, but the distribution also features a long tail on the right consisting of a small number of genes with relatively large effects. Because of its long right-hand tail, the distribution is said to be skewed to the right. The mean, median, and mode of the distribution in Figure 4A are all different from each other. The mode of a distribution is the “most popular” value where the distribution peaks, the median is the “middle” value that divides the distribution into two equal halves, and the mean is the arithmetic average of the values.

The example in Figure 4B depicts mortality of newborns as a function of birth weight. The minimum of the distribution is in the middle, which means that babies of intermediate birth weight have the greatest chance of survival, with decreasing chances of survival on either side. In this example, the mean and the median are the same, but the mode differs. This example actually has two modes, one at each extreme, which means it is a bimodal distribution.

The distributions in Figures 4A and 4B have standard deviations too, and again they measure the scatter of most of the observations around the mean. In these cases, however, the proportion of observations within ± 1 and ± 2 of the mean differs from the 68% and 95% that characterize the normal distribution (Figure 2).

Correlation and Regression

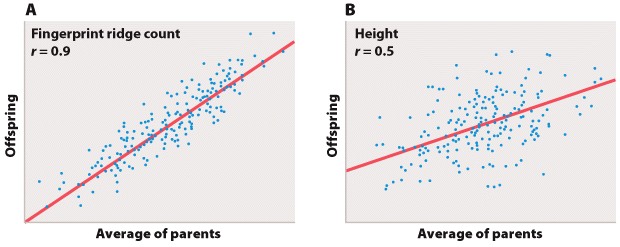

Biologists often are also interested in the relation between two different measurements, such as height and weight or number of species on an island versus the size of the island. Such data are often depicted as a scatter plot (Figure 5), in which the magnitude of one variable is plotted along the x-axis and the other along the y-axis, each point representing one paired observation.

Figure 5A is the sort of data that would correspond to fingerprint ridge count (the number of raised skin ridges lying between two reference points in each fingerprint). While the data show some scatter, the overall trend is evident. There is a very strong association between the average fingerprint ridge count of parents and that of their offspring. The strength of association between two variables can be measured by the correlation coefficient, which theoretically ranges between +1 and –1. A correlation coefficient of +1 means a perfect positive relation (as one variable increases, the other increases proportionally), and a correlation coefficient of –1 implies a perfect negative relation (as one variable increases, the other decreases proportionally). Correlation coefficients of +1 or –1 are rarely observed in real data. In the case of fingerprint ridge count, the correlation coefficient is 0.9, which implies that the average fingerprint ridge count of offspring is almost (but not quite) equal to that of the parents. For a complex trait, this is a remarkably strong correlation.

Figure 5B represents data that would correspond to adult height. The data exhibit greater scatter than in Figure 5A; however, there is still a fairly strong resemblance between parents and offspring. The correlation coefficient in this case is 0.5. This value means that, on average, the offspring height is approximately halfway between that of the average of the parents and the average of the population as a whole.

The illustrations in Figure 5A and 5B also emphasize one limitation of the correlation coefficient. The correlation coefficient measures the strength of a straight-line (linear) relation. A nonlinear relation (one curving upward or downward) between two variables could be quite strong, but the data might still show a weak correlation.

Each of the straight lines in Figure 5 is a regression line or, more precisely, a regression line of y on x. Each line depicts how, on average, the variable y changes as a function of the variable x across the whole set of data. The slope of the line tells you how many units y changes, on average, for a unit change in x. A slope of +1 implies that a one-unit change in x results in a one-unit change in y, and a slope of 0 implies that the value of x has no effect on the value of y. The slope of a straight line relating values of y to those of x is known as the regression coefficient.

Covariance

Both the correlation coefficient and the regression coefficient are related to a value called the covariance between the variables x and y. The covariance is a statistical quantity representing the extent to which the values of x and y change together. For a sample of n pairs of values (xi, yi), where i takes on the values 1, 2, ..., n, the covariance (cov) between x and y is estimated using the equation

This means that, for every pair of values (xi, yi), we multiply the difference between each value and its mean, add the products across all samples, and then divide by n – 1. Again, the n – 1 has the effect of emphasizing that one cannot estimate the covariance between two variables by examining a single (x, y) pair.

If the deviation of an x value from its mean tends to have the same sign (positive or negative) as the corresponding deviation of the y value from its mean, then the covariance between the variables is positive. And if the deviation of an x value from its mean tends to have the opposite sign from the corresponding deviation of the y value from its mean, then the covariance between the variables is negative.

The correlation coefficient (r) between two variables expresses the covariance in terms of the product of the standard deviations, namely

where sx and sy are the standard deviations of x and y. Dividing the covariance by the product of the standard deviations removes the units of measurement and limits the range of the correlation coefficient from -1 to +1.

On the other hand, the slope of the regression line of y on x is given by a quantity often symbolized as b, calculated from a sample as

In the special case when sx= sy = 1 (as they are in Figure 5), the correlation coefficient and the regression coefficient are identical and both equal cov(x, y).

Statistical Significance

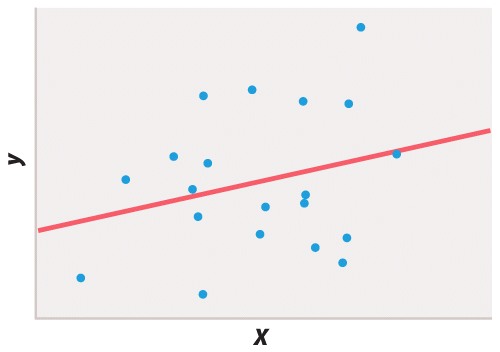

Biologists observe many relations between variables that are either due to chance in the sample that happened to be chosen or that are too weak to be biologically important. To distinguish the accidental or weak relations from the real and strong ones, a statistical test of the relation is carried out. A statistical test must be based on some specific hypothesis. For example, to determine whether an observed correlation coefficient could be due to chance, we might carry out a statistical test of the hypothesis that the true correlation coefficient is 0. A statistical test usually yields a single number, usually called the P-value (or sometimes p-value), that expresses the likelihood that an observed result (such as a correlation coefficient) could have been observed merely by chance. A P-value is a probability, and if P ≤ 0.05 the observed results are conventionally regarded as unlikely to be attributed to chance alone. In that case, the observed relation is likely to be genuine. In other words, if an observed relation would be obtained by chance alone in only 1 in 20 or fewer experiments (P ≤ 0.05), then the observed relation is regarded as likely to be true. A finding of P ≤ 0.01 is taken as even stronger evidence that the observed result is unlikely to be due to chance.

Statistical testing is necessary because different researchers may disagree on whether or not a finding supports a particular hypothesis or if the interpretation of a result could be affected by wishful thinking. Take Figure 6, for example. If you wish to believe that there was a functional relation between x and y, you might easily convince yourself that the 20 data points fit the straight line. But in fact the P value of the regression coefficient is about P = 0.25, which means that about 25% of the time you would get a line that fits the data as well or better than the line you observed, purely by chance. The proper conclusion is that these data give no support for the hypothesis of a functional relation between x and y. If there is such a relation, then it is too weak to show up in a sample of only 20 pairs of points.

There is good reason to be cautious even when a result is statistically significant. Bear in mind that 5% of statistical tests are misleading in that they indicate that some result is significant merely as a matter of chance. For example, over any short period of time, about 5% of companies listed in stock exchanges will have changes in the dollar value of their shares that are significantly correlated with changes in the number of sunspots, even though the correlation is certainly spurious and due to chance alone. Critical thinking therefore requires that one maintain some skepticism even when faced with statistically significant results published in peer-reviewed scientific journals. Scientific proof rarely hinges on the result of a single experiment, measurement, or observation. It is the accumulation of evidence from many independent sources, all pointing in the same direction, that lends increasing credence to a scientific hypothesis until eventually it becomes a theory.