Operant Conditioning

6-5 What is operant conditioning, and how is operant behavior reinforced and shaped?

It’s one thing to classically condition a dog to drool at the sound of a tone, or a child to fear moving cars. To teach an elephant to walk on its hind legs or a child to say please, we must turn to another type of learning—operant conditioning.

Classical conditioning and operant conditioning are both forms of associative learning, yet their difference is straightforward:

- In classical conditioning, an animal (dog, child, sea slug) forms associations between two events it does not control. Classical conditioning involves respondent behavior—automatic responses to a stimulus (such as salivating in response to meat powder and later in response to a tone).

- In operant conditioning, animals associate their own actions with consequences. Actions followed by a rewarding event increase; those followed by a punishing event decrease. Behavior that operates on the environment to produce rewarding or punishing events is called operant behavior.

We can therefore distinguish our classical from our operant conditioning by asking two questions. Are we learning associations between events we do not control (classical conditioning)? Or are we learning associations between our behavior and resulting events (operant conditioning)?

RETRIEVE + REMEMBER

Question 6.8

With _______ conditioning, we learn associations between events we do not control. With ________ conditioning, we learn associations between our behavior and resulting events.

classical; operant

Skinner’s Experiments

B. F. Skinner (1904–1990) was a college English major who had set his sights on becoming a writer. Then, seeking a new direction, he became a graduate student in psychology, and, eventually, modern behaviorism’s most influential and controversial figure.

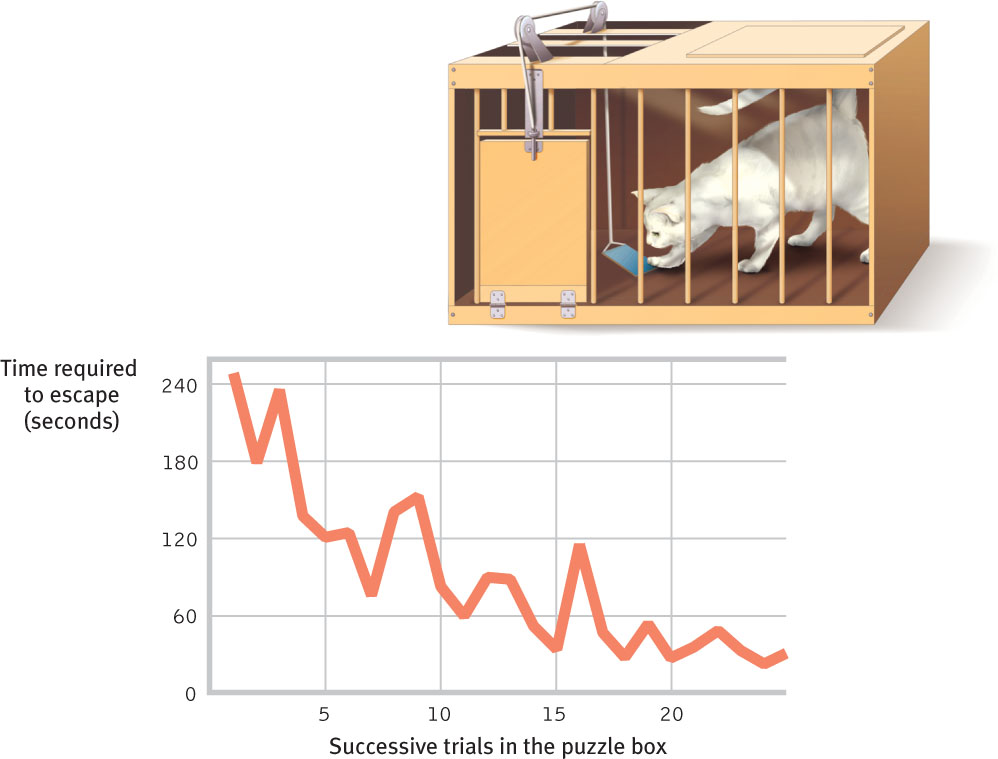

Skinner’s work built on a principle that psychologist Edward L. Thorndike (1874–1949) called the law of effect: Rewarded behavior is likely to be repeated (FIGURE 6.6). From this starting point, Skinner went on to develop experiments that would reveal principles of behavior control. Using these principles, he taught pigeons to walk a figure 8, play Ping-Pong®, and keep a missile on course by pecking at a screen target.

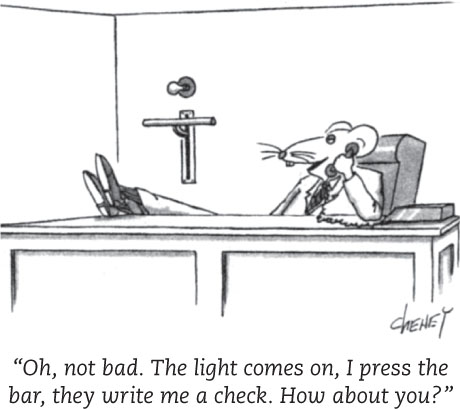

For his studies, Skinner designed an operant chamber, popularly known as a Skinner box (FIGURE 6.7). The box has a bar or button that an animal presses or pecks to release a food or water reward. It also has a device that records these responses. This design creates a stage on which rats and other animals act out Skinner’s concept of reinforcement: any event that strengthens (increases the frequency of) a preceding response. What is reinforcing depends on the animal and the conditions. For people, it may be praise, attention, or a paycheck. For hungry and thirsty rats, food and water work well. Skinner’s experiments have done far more than teach us how to pull habits out of a rat. They have explored the precise conditions that foster efficient and enduring learning.

Shaping Behavior

Imagine that you wanted to condition a hungry rat to press a bar. Like Skinner, you could tease out this action with shaping, gradually guiding the rat’s actions toward the desired behavior. First, you would watch how the animal naturally behaves, so that you could build on its existing behaviors. You might give the rat a bit of food each time it approaches the bar. Once the rat is approaching regularly, you would give the treat only when it moves close to the bar, then closer still. Finally, you would require it to touch the bar to get food. With this method of successive approximations, you reward responses that are ever-closer to the final desired behavior. By giving rewards only for desired behaviors and ignoring all other responses, researchers and animal trainers gradually shape complex behaviors.
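The method of successive approximations can be sketched as a short Python loop. This is only an illustration, not a record of Skinner's procedure: the function name `shape`, the distances from the bar, and the list of criteria are all hypothetical. The trainer rewards any response that meets the current criterion, ignores everything else, and tightens the criterion after each success.

```python
# Hypothetical sketch of shaping by successive approximations.
# Distances (in cm from the bar) and criteria are invented for illustration.

def shape(observed_distances, criteria):
    """Return one (distance, rewarded) pair per observed response.

    criteria: maximum distances from the bar that earn a reward,
    ordered from lenient to strict (0 means touching the bar).
    After each rewarded response the trainer moves to the next,
    stricter criterion; all other responses are ignored.
    """
    step = 0
    log = []
    for distance in observed_distances:
        rewarded = distance <= criteria[step]
        log.append((distance, rewarded))
        # Tighten the requirement only after a success; stay on the last one.
        if rewarded and step < len(criteria) - 1:
            step += 1
    return log


# The rat wanders, approaches, moves closer, and finally touches the bar.
responses = [50, 28, 40, 12, 18, 4, 0]
history = shape(responses, criteria=[30, 15, 5, 0])
```

In this sketch a response that would have earned food early on (18 cm) goes unrewarded once the criterion has tightened to 5 cm, which is the sense in which only ever-closer approximations are reinforced.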

Shaping can also help us understand what nonverbal organisms perceive. Can a dog see red and green? Can a baby hear the difference between lower- and higher-pitched tones? If we can shape them to respond to one stimulus and not to another, then we know they can perceive the difference. Such experiments have even shown that some animals can form concepts. When experimenters reinforced pigeons for pecking after seeing a human face, but not after seeing other images, the pigeons learned to recognize human faces (Herrnstein & Loveland, 1964). After being trained to discriminate among classes of events or objects—flowers, people, cars, chairs—pigeons were usually able to identify the category in which a new pictured object belonged (Bhatt et al., 1988; Wasserman, 1993).

In everyday life, we continually reinforce and shape others’ behavior, said Skinner, though we may not mean to do so. Isaac’s whining, for example, annoys his dad, but look how he responds:

Isaac: Could you take me to the mall?

Dad: (Ignores Isaac and stays focused on his phone.)

Isaac: Dad, I need to go to the mall.

Dad: (distracted) Uh, yeah, just a minute.

Isaac: DAAAD! The mall!!

Dad: Show me some manners! Okay, where are my keys…

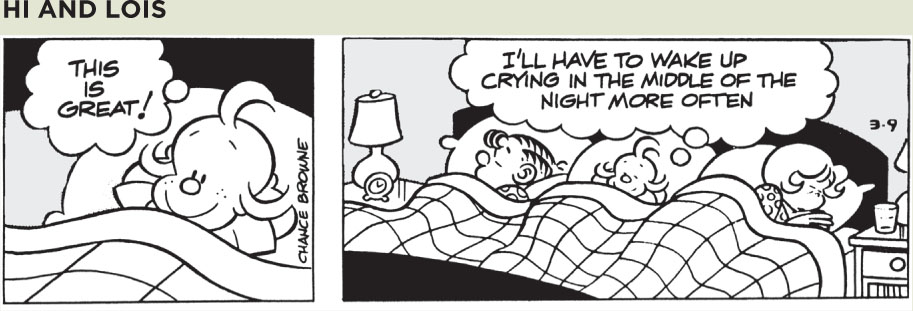

Isaac’s whining is reinforced, because he gets something desirable—his dad’s attention. Dad’s response is reinforced because it ends something aversive (unpleasant)—Isaac’s whining.

Or consider a teacher who sticks gold stars on a wall chart beside the names of children scoring 100 percent on spelling tests. As everyone can then see, some children always score 100 percent. The others, who take the same test and may have worked harder than the academic all-stars, get no stars. Using operant conditioning principles, what advice could you offer the teacher to help all students do their best work?¹

Types of Reinforcers

6-6 How do positive and negative reinforcement differ, and what are the basic types of reinforcers?

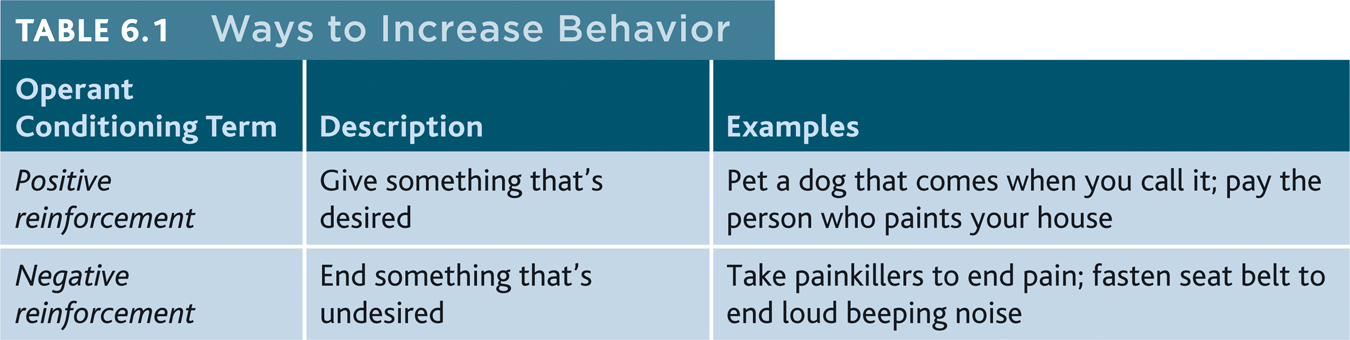

Up to now, we’ve mainly been discussing positive reinforcement, which strengthens a response by presenting a typically pleasurable stimulus after a response. But, as we saw in the whining Isaac story, there are two basic kinds of reinforcement (TABLE 6.1). Negative reinforcement strengthens a response by reducing or removing something undesirable or unpleasant. Isaac’s whining was positively reinforced, because Isaac got something desirable—his father’s attention. His dad’s response to the whining (doing what Isaac wanted) was negatively reinforced, because it got rid of Isaac’s annoying whining. Similarly, taking aspirin may relieve your headache and hitting snooze will silence your annoying alarm. These welcome results provide negative reinforcement and increase the odds that you will repeat these behaviors. For drug addicts, the negative reinforcement of ending withdrawal pangs can be a compelling reason to resume using (Baker et al., 2004).

Note that negative reinforcement is not punishment. (Some friendly advice: Repeat the last five words in your mind.) Rather, negative reinforcement removes a punishing event. Think of negative reinforcement as something that provides relief—from that whining teenager, bad headache, or annoying alarm. Whether it works by getting rid of something we don’t enjoy, or by giving us something we do enjoy, reinforcement is any consequence that strengthens behavior.

PRIMARY AND CONDITIONED REINFORCERS Getting food when hungry or having a painful headache go away is innately (naturally) satisfying. These primary reinforcers are unlearned. Conditioned reinforcers, also called secondary reinforcers, get their power through learned associations with primary reinforcers. If a rat in a Skinner box learns that a light reliably signals a food delivery, the rat will work to turn on the light. The light has become a secondary reinforcer linked with food. Our lives are filled with conditioned reinforcers—money, good grades, a pleasant tone of voice—each of which has been linked with a more basic reward—food and shelter, safety, social support.

IMMEDIATE AND DELAYED REINFORCERS In shaping experiments, rats are conditioned with immediate rewards. You want the rat to press the bar, it sniffs the bar, and it immediately gets a food pellet. If a distraction delays your giving the rat its prize, the rat won’t learn to link the bar sniffing with the food pellet reward.

Unlike rats, humans do respond to delayed reinforcers. We associate the paycheck at the end of the week, the good grade at the end of the semester, the trophy at the end of the season with our earlier actions. Indeed, learning to control our impulses in order to achieve more valued rewards is a big step toward maturity (Logue, 1998a,b). Chapter 3 described a famous finding in which children did curb their impulses and delay gratification, choosing two marshmallows later over one now. Those same children achieved greater educational and vocational success later in life (Mischel et al., 1988, 1989).

Sometimes, however, small but immediate pleasures (the enjoyment of watching late-night TV, for example) blind us to big but delayed consequences (feeling alert tomorrow). For many teens, the immediate gratification of impulsive, unprotected sex wins over the delayed gratification of safe sex or saved sex (Loewenstein & Furstenberg, 1991). And for too many of us, the immediate rewards of today’s gas-guzzling vehicles, air travel, and air conditioning win over the bigger future consequences of climate change, rising seas, and extreme weather.

Reinforcement Schedules

6-7 How do continuous and partial reinforcement schedules affect behavior?

In most of our examples, the desired response has been reinforced every time it occurs. This is continuous reinforcement. But reinforcement schedules vary, and they influence our learning. Continuous reinforcement is a good choice for mastering a behavior because learning occurs rapidly. But there’s a catch: Extinction also occurs rapidly. When reinforcement stops—when we stop delivering food after the rat presses the bar—the behavior soon stops. If a normally dependable candy machine fails to deliver a chocolate bar twice in a row, we stop putting money into it (although a week later we may exhibit spontaneous recovery by trying again).

Real life rarely provides continuous reinforcement. Salespeople don’t make a sale with every pitch. But they persist because their efforts are occasionally rewarded. And that’s the good news about partial (intermittent) reinforcement schedules, in which responses are sometimes reinforced, sometimes not. Learning is slower than with continuous reinforcement, but resistance to extinction is greater. Imagine a pigeon that has learned to peck a key to obtain food. If you gradually phase out the food delivery until it occurs only rarely, in no predictable pattern, the pigeon may peck 150,000 times without a reward (Skinner, 1953). Slot machines reward gamblers in much the same way—occasionally and unpredictably. And like pigeons, slot players keep trying, again and again. With intermittent reinforcement, hope springs eternal.

Lesson for parents: Partial reinforcement also works with children. What happens when we occasionally give in to children’s tantrums for the sake of peace and quiet? We have intermittently reinforced the tantrums. This is the best way to make a behavior persist.

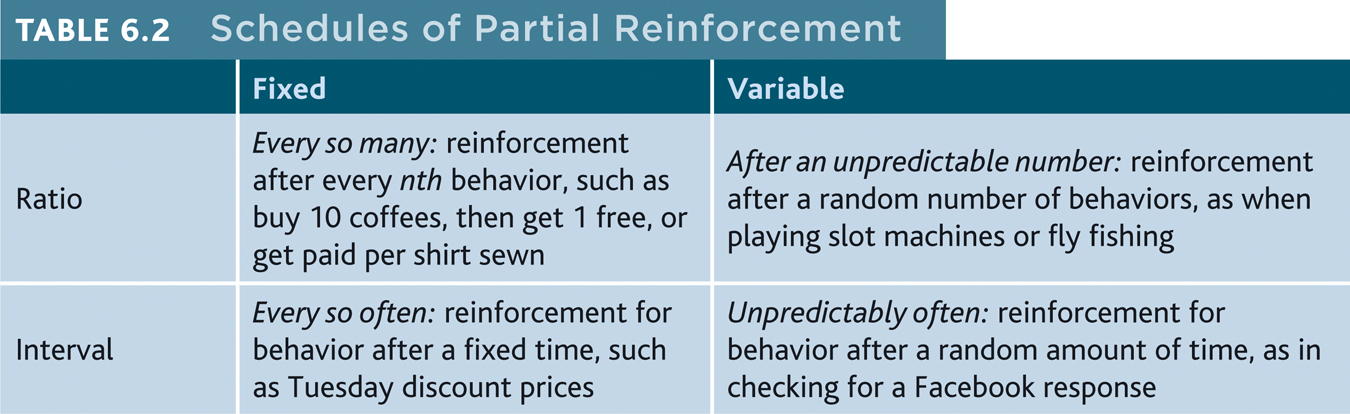

Skinner (1961) and his collaborators compared four schedules of partial reinforcement. Some are rigidly fixed, some unpredictably variable (TABLE 6.2).

Fixed-ratio schedules reinforce behavior after a set number of responses. Coffee shops may reward us with a free drink after every 10 purchased. In the laboratory, rats may be reinforced on a fixed ratio of, say, one food pellet for every 30 responses. Once conditioned, the rats will pause only briefly to munch on the pellet before returning to a high rate of responding.

Variable-ratio schedules provide reinforcers after an unpredictable number of responses. This is what slot-machine players and fly-casting anglers experience—unpredictable reinforcement. And it’s what makes gambling and fly fishing so hard to extinguish even when both are getting nothing for something. Because reinforcers increase as the number of responses increases, variable-ratio schedules produce high rates of responding.

Fixed-interval schedules reinforce the first response after a fixed time period. Pigeons on a fixed-interval schedule peck more rapidly as the time for reinforcement draws near. People waiting for an important letter check more often as delivery time approaches. A hungry cook peeks into the oven frequently to see if cookies are brown. This produces a choppy stop-start pattern rather than a steady rate of response.

Variable-interval schedules reinforce the first response after unpredictable time intervals. At varying times, longed-for responses finally reward persistence in rechecking Facebook or e-mail. And at unpredictable times, a food pellet rewarded Skinner’s pigeons for persistence in pecking a key. Variable-interval schedules tend to produce slow, steady responding. This makes sense, because there is no knowing when the waiting will be over.

In general, response rates are higher when reinforcement is linked to the number of responses (a ratio schedule) rather than to time (an interval schedule). But responding is more consistent when reinforcement is unpredictable (a variable schedule) than when it is predictable (a fixed schedule).
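The four schedules differ only in what must happen before the next reinforcer becomes available, and a small Python sketch can make that concrete. Everything named here (`RatioSchedule`, `IntervalSchedule`, the four factory functions, and the choice of uniform random draws for the variable schedules) is a hypothetical illustration rather than Skinner's apparatus. The sketch also assumes that interval timing restarts at each reinforcement.

```python
# Hypothetical sketch of the four partial reinforcement schedules.
# Each schedule answers one question: is this response reinforced?
import random


class RatioSchedule:
    """Reinforce after a required number of responses."""

    def __init__(self, next_requirement):
        self.next_requirement = next_requirement  # callable returning an int
        self.required = next_requirement()
        self.count = 0

    def respond(self, now):
        # Ratio schedules ignore the clock; only the response count matters.
        self.count += 1
        if self.count >= self.required:
            self.count = 0
            self.required = self.next_requirement()
            return True
        return False


class IntervalSchedule:
    """Reinforce the first response after a required time has elapsed."""

    def __init__(self, next_wait):
        self.next_wait = next_wait  # callable returning a duration
        self.available_at = next_wait()

    def respond(self, now):
        # Responses before the interval ends earn nothing.
        if now >= self.available_at:
            self.available_at = now + self.next_wait()
            return True
        return False


def fixed_ratio(n):
    return RatioSchedule(lambda: n)


def variable_ratio(mean_n, rng):
    # Assumed distribution: uniform over 1..(2 * mean_n - 1), averaging mean_n.
    return RatioSchedule(lambda: rng.randint(1, 2 * mean_n - 1))


def fixed_interval(seconds):
    return IntervalSchedule(lambda: seconds)


def variable_interval(mean_seconds, rng):
    # Assumed distribution: uniform between 0 and twice the mean wait.
    return IntervalSchedule(lambda: rng.uniform(0, 2 * mean_seconds))


# A free drink after every 10 purchases: the 10th, 20th, and 30th are rewarded.
coffee_card = fixed_ratio(10)
rewards = [coffee_card.respond(now=t) for t in range(30)]
```

On the ratio schedules, responding faster brings reinforcers sooner. On the interval schedules, extra responses before the time is up earn nothing, which fits the lower response rates described above.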

Animal behaviors differ, yet Skinner (1956) contended that the reinforcement principles of operant conditioning are universal. It matters little, he said, what response, what reinforcer, or what species you use. The effect of a given reinforcement schedule is pretty much the same: “Pigeon, rat, monkey, which is which? It doesn’t matter…. Behavior shows astonishingly similar properties.”

RETRIEVE + REMEMBER

Question 6.9

Telemarketers are reinforced by which schedule? People checking the oven to see if the cookies are done are on which schedule? Airline frequent flyer programs that offer a free flight after every 25,000 miles of travel are using which reinforcement schedule?

Telemarketers are reinforced on a variable-ratio schedule (after a varying number of calls). Cookie checkers are reinforced on a fixed-interval schedule. Frequent-flyer programs use a fixed-ratio schedule.

Punishment

6-8 How does punishment differ from negative reinforcement, and how does punishment affect behavior?

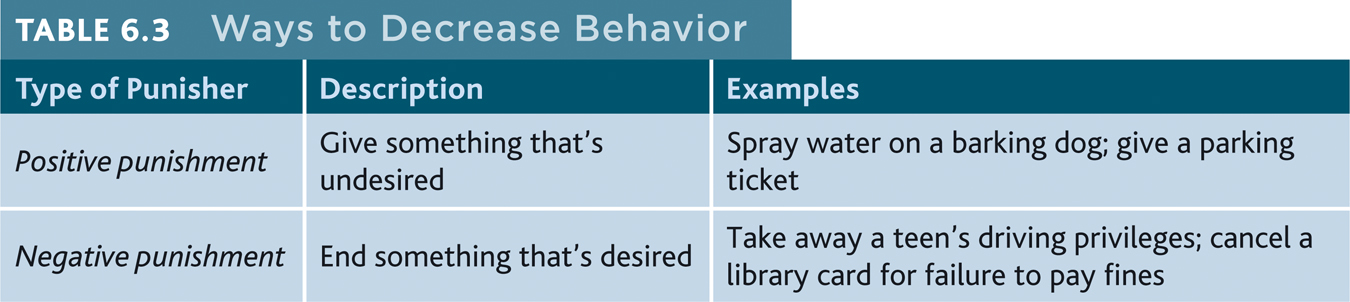

Reinforcement increases a behavior; punishment does the opposite. A punisher is any consequence that decreases the frequency of the behavior it follows (TABLE 6.3). Swift and sure punishers can powerfully restrain unwanted behaviors. The rat that is shocked after touching a forbidden object and the child who is burned by touching a hot stove will learn not to repeat those behaviors.

Criminal behavior, much of it impulsive, is also influenced more by swift and sure punishers than by the threat of severe sentences (Darley & Alter, 2011). Thus, when Arizona introduced an exceptionally harsh sentence for first-time drunk drivers, the drunk-driving rate changed very little. But when Kansas City police started patrolling a high-crime area to increase the sureness and swiftness of punishment, that city’s crime rate dropped dramatically.

What do punishment studies tell us about parenting practices? Should we physically punish children to change their behavior? Many psychologists and supporters of nonviolent parenting say No, pointing out four major drawbacks of physical punishment (Gershoff, 2002; Marshall, 2002).

- Punished behavior is suppressed, not forgotten. This temporary state may (negatively) reinforce parents’ punishing behavior. The child swears, the parent swats, the parent hears no more swearing and feels the punishment successfully stopped the behavior. No wonder spanking is a hit with so many U.S. parents of 3- and 4-year-olds—more than 9 in 10 of whom admit spanking their children (Kazdin & Benjet, 2003).

- Punishment teaches discrimination among situations. In operant conditioning, discrimination occurs when we learn that some responses, but not others, will be reinforced. Did the punishment effectively end the child’s swearing? Or did the child simply learn that it’s not okay to swear around the house, but it is okay to swear elsewhere?

- Punishment can teach fear. In operant conditioning, generalization occurs when our responses to similar stimuli are also reinforced. A punished child may associate fear not only with the undesirable behavior but also with the person who delivered the punishment or the place it occurred. Thus, children may learn to fear a punishing teacher and try to avoid school, or may become more anxious (Gershoff et al., 2010). For such reasons, most European countries and most U.S. states now ban hitting children in schools and child-care institutions (www.stophitting.com). In addition, 33 countries outlaw hitting by parents, giving children the same legal protection given to spouses.

- Physical punishment may increase aggression by modeling aggression as a way to cope with problems. Studies find that spanked children are at increased risk for aggression (and depression and low self-esteem). We know, for example, that many aggressive delinquents and abusive parents come from abusive families (Straus et al., 1997). Some researchers have noted a problem with this logic. Well, yes, they’ve said, physically punished children may be more aggressive, for the same reason that people who have undergone psychotherapy are more likely to suffer depression—because they had preexisting problems that triggered the treatments (Larzelere, 2000, 2004). Which is the chicken and which is the egg? Correlations don’t hand us an answer.

If one adjusts for preexisting antisocial behavior, then an occasional single swat or two to misbehaving 2- to 6-year-olds looks more effective (Baumrind et al., 2002; Larzelere & Kuhn, 2005). That is especially so if two other conditions are met:

- The swat is used only as a backup when milder disciplinary tactics, such as a time-out (removing the child from reinforcing surroundings), fail.

- The swat is combined with a generous dose of reasoning and reinforcing.

But the debate continues. Other researchers note that frequent spankings predict future aggression—even when studies control for preexisting bad behavior (Taylor et al., 2010).

Parents of delinquent youths may not know how to achieve desirable behaviors without screaming at or hitting their children (Patterson et al., 1982). Training programs can help them translate dire threats (“Apologize right now or I’m taking that cell phone away!”) into positive incentives (“You’re welcome to have your phone back when you apologize”). Stop and think about it. Aren’t many threats of punishment just as forceful, and perhaps more effective, when rephrased positively? Thus, “If you don’t get your homework done, I’m not giving you money for a movie!” would better be phrased as…

In classrooms, too, teachers can give feedback on papers by saying “No, but try this…” and “Yes, that’s it!” Such responses reduce unwanted behavior while reinforcing more desirable alternatives. Remember: Punishment tells you what not to do; reinforcement tells you what to do.

What punishment often teaches, said Skinner, is how to avoid it. The bottom line: Most psychologists now favor an emphasis on reinforcement: Notice people doing something right and affirm them for it.

RETRIEVE + REMEMBER

Question 6.10

Fill in the blanks below with one of the following terms: negative reinforcement (NR), positive punishment (PP), and negative punishment (NP). The first answer, positive reinforcement (PR), is provided for you.

| Type of Stimulus | Give It | Take It Away |
|---|---|---|
| Desired (for example, a teen’s use of the car): | 1. PR | 2. |
| Undesired/aversive (for example, an insult): | 3. | 4. |

1. PR (positive reinforcement); 2. NP (negative punishment); 3. PP (positive punishment); 4. NR (negative reinforcement)
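The two-by-two logic of the table above can be written as a pair of small Python functions. This is a hedged sketch for study purposes: the function names and the Boolean parameters are hypothetical, chosen only to mirror the table's rows (desired or undesired stimulus) and columns (give it or take it away).

```python
# Hypothetical helpers mapping the 2 x 2 table onto the four terms.

def classify_consequence(stimulus_is_desired, stimulus_is_given):
    """Name the operant consequence for one cell of the table."""
    if stimulus_is_desired:
        # Giving something desired rewards; taking it away punishes.
        return "positive reinforcement" if stimulus_is_given else "negative punishment"
    # Giving something aversive punishes; taking it away brings relief.
    return "positive punishment" if stimulus_is_given else "negative reinforcement"


def effect_on_behavior(consequence):
    """Reinforcement strengthens the behavior it follows; punishment weakens it."""
    return "increases" if consequence.endswith("reinforcement") else "decreases"


# Taking away a teen's use of the car (a desired stimulus).
car_removed = classify_consequence(stimulus_is_desired=True, stimulus_is_given=False)
```

Here "positive" and "negative" describe only whether a stimulus is given or taken away. Whether the behavior increases or decreases depends on the second word of the term.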

Skinner’s Legacy

6-9 Why were Skinner’s ideas controversial, and how are educators, managers, and parents applying operant principles?

B. F. Skinner stirred a hornet’s nest with his outspoken beliefs. He repeatedly insisted that external influences (not internal thoughts and feelings) shape behavior. And he urged people to use operant principles to influence others’ behavior at school, work, and home. Knowing that behavior is shaped by its results, he said, we should use rewards to evoke more desirable behavior.

Skinner’s critics objected, saying that by neglecting people’s personal freedom and trying to control their actions, he treated them as less than human. Skinner’s reply: External consequences already control people’s behavior. So why not steer those consequences toward human betterment? Wouldn’t reinforcers be more humane than the punishments used in homes, schools, and prisons? And if it is humbling to think that our history has shaped us, doesn’t this very idea also give us hope that we can shape our future?

Applications of Operant Conditioning

In later chapters we will see how psychologists apply operant conditioning principles to help people reduce high blood pressure or gain social skills. Reinforcement techniques are also at work in schools, workplaces, and homes (Flora, 2004).

AT SCHOOL More than 50 years ago, Skinner and others worked toward a day when “machines and textbooks” would shape learning in small steps, by immediately reinforcing correct responses. Such machines and texts, they said, would revolutionize education and free teachers to focus on each student’s special needs. “Good instruction demands two things,” said Skinner (1989). “Students must be told immediately whether what they do is right or wrong and, when right, they must be directed to the step to be taken next.”

Skinner might be pleased to know that many of his ideals for education are now possible. Teachers used to find it difficult to pace material to each student’s rate of learning, and to provide prompt feedback. Electronic adaptive quizzing (such as the system available with this text) does both. Students move through quizzes at their own pace, according to their own level of understanding. And they get immediate feedback on their efforts.

AT WORK Skinner’s ideas also show up in the workplace. Knowing that reinforcers influence productivity, many organizations have invited employees to share the risks and rewards of company ownership. Others have focused on reinforcing a job well done. Rewards are most likely to increase productivity if the desired performance is well-defined and is achievable. How might managers successfully motivate their employees? Reward specific, achievable behaviors, not vaguely defined “merit.”

Operant conditioning also reminds us that reinforcement should be immediate. IBM legend Thomas Watson understood. When he observed an achievement, he wrote the employee a check on the spot (Peters & Waterman, 1982). But rewards don’t have to be material, or lavish. An effective manager may simply walk the floor and sincerely praise people for good work, or write notes of appreciation for a completed project. As Skinner said, “How much richer would the whole world be if the reinforcers in daily life were more effectively contingent on productive work?”

AT HOME As we have seen, parents can learn from operant conditioning practices. Parent-training researchers remind us that by saying “Get ready for bed” and then caving in to protests or defiance, parents reinforce such whining and arguing. Exasperated, they may then yell or make threatening gestures. When the child, now frightened, obeys, that in turn reinforces the parents’ angry behavior. Over time, a destructive parent-child relationship develops.

To disrupt this cycle, parents should remember the basic rule of shaping: Notice people doing something right and affirm them for it. Give children attention and other reinforcers when they are behaving well (Wierson & Forehand, 1994). Target a specific behavior, reward it, and watch it increase. When children misbehave or are defiant, do not yell at or hit them. Simply explain what they did wrong and give them a time-out.

Operant conditioning principles can also help us change our own behaviors. For some tips, see Close-Up: Using Operant Conditioning to Build Your Own Strengths.

RETRIEVE + REMEMBER

Question 6.11

Ethan constantly misbehaves at preschool even though his teacher scolds him repeatedly. Why does Ethan’s misbehavior continue, and what can his teacher do to stop it?

If Ethan is seeking attention, the teacher’s scolding may be reinforcing rather than punishing. To change Ethan’s behavior, his teacher could offer reinforcement (such as praise) each time he behaves well. The teacher might encourage Ethan toward increasingly appropriate behavior through shaping, or by rephrasing rules as rewards instead of punishments. (“You can have a snack if you play nicely with the other children” [reward] rather than “You will not get a snack if you misbehave!” [punishment]).

Contrasting Classical and Operant Conditioning

6-10 How does classical conditioning differ from operant conditioning?

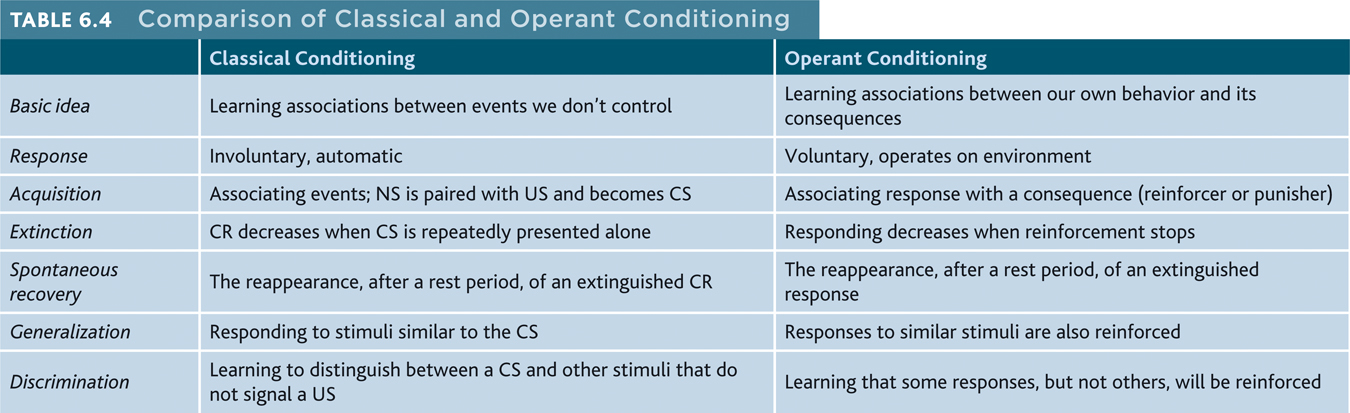

Both classical and operant conditioning are forms of associative learning (TABLE 6.4). In both, we acquire behaviors that may later become extinct and then spontaneously reappear. We often generalize our responses but learn to discriminate among different stimuli.

Classical and operant conditioning also differ: Through classical conditioning, we associate different events that we don’t control, and we respond automatically (respondent behaviors). Through operant conditioning, we associate our own behaviors, which act on our environment to produce rewarding or punishing events (operant behaviors), with their consequences.

CLOSE-UP

Using Operant Conditioning to Build Your Own Strengths

Want to stop smoking? Eat less? Study or exercise more? To reinforce your own desired behaviors and extinguish the undesired ones, psychologists suggest taking five steps.

- State your goal in measurable terms and announce it. You might, for example, aim to boost your study time by an hour a day and share that goal with friends.

- Decide how, when, and where you will work toward your goal. Take time to plan. Those who list specific steps showing how they will reach their goals more often achieve them (Gollwitzer & Oettingen, 2012).

- Monitor how often you engage in your desired behavior. You might log your current study time, noting under what conditions you do and don’t study. (When I began writing textbooks, I logged how I spent my time each day and was amazed to discover how much time I was wasting.)

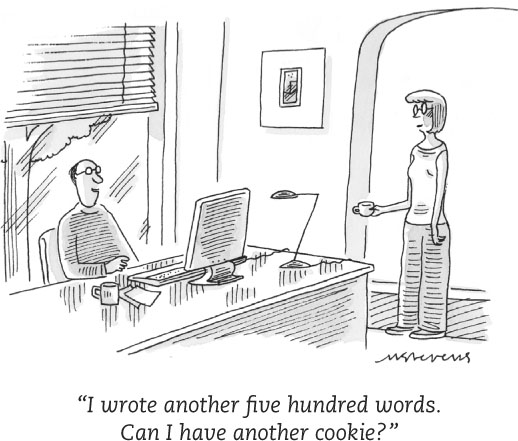

© The New Yorker Collection, 2001, Mick Stevens from cartoonbank.com. All Rights Reserved.

- Reinforce the desired behavior. To increase your study time, give yourself a reward (a snack or some activity you enjoy) only after you finish your extra hour of study. Agree with your friends that you will join them for weekend activities only if you have met your realistic weekly studying goal.

- Reduce the rewards gradually. As your new behaviors become habits, give yourself a mental pat on the back instead of a cookie.

As we shall next see, our biology and our thought processes influence both classical and operant conditioning.

RETRIEVE + REMEMBER

Question 6.12

Salivating in response to a tone paired with food is a(n) ________ behavior; pressing a bar to obtain food is a(n) _________ behavior.

respondent; operant