7.2 Operant Conditioning: Reinforcements from the Environment

The study of classical conditioning is the study of behaviors that are reactive. Most animals don’t voluntarily salivate or feel spasms of anxiety; rather, animals exhibit these responses involuntarily during the conditioning process. But we also engage in voluntary behaviors in order to obtain rewards and avoid punishment. Operant conditioning is a type of learning in which the consequences of an organism’s behavior determine whether it will be repeated in the future. The study of operant conditioning is the exploration of behaviors that are active.

operant conditioning

A type of learning in which the consequences of an organism’s behavior determine whether it will be repeated in the future.

The Development of Operant Conditioning: The Law of Effect

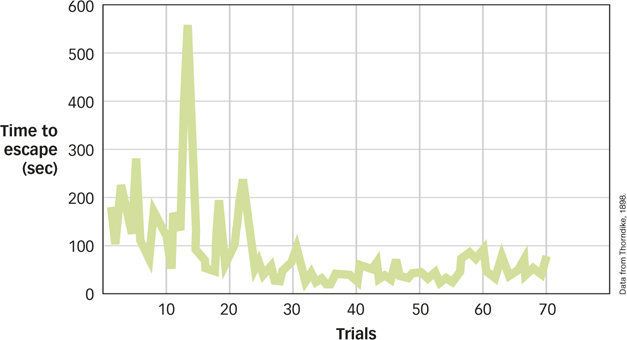

The study of how active behavior affects the environment began at about the same time as classical conditioning. In the 1890s, Edward Thorndike studied instrumental behaviors—that is, behavior that required an organism to do something, solve a problem, or otherwise manipulate elements of its environment (Thorndike, 1898). Some of Thorndike’s experiments used a puzzle box, which was a wooden crate with a door that would open when a concealed lever was moved in the right way (see FIGURE 7.4). A hungry cat placed in a puzzle box would try various behaviors to get out—

law of effect

Behaviors that are followed by a “satisfying state of affairs” tend to be repeated and those that produce an “unpleasant state of affairs” are less likely to be repeated.


What is the relationship between behavior and reward?

Such learning is very different from classical conditioning. Remember that in classical conditioning experiments, the US occurred on every training trial no matter what the animal did. Pavlov delivered food to the dog whether it salivated or not. But in Thorndike’s work, the behavior of the animal determined what happened next. If the behavior was “correct” (i.e., the latch was triggered), the animal was rewarded with food. Incorrect behaviors produced no results, and the animal was stuck in the box until it performed the correct behavior. Although different from classical conditioning, Thorndike’s work resonated with most behaviorists at the time: It was still observable, quantifiable, and free from explanations involving the mind (Galef, 1998).

B. F. Skinner: The Role of Reinforcement and Punishment

Several decades after Thorndike’s work, B. F. Skinner (1904–

operant behavior

Behavior that an organism produces that has some impact on the environment.


Skinner’s approach to the study of learning focused on reinforcement and punishment. These terms have commonsense connotations, but in psychology they are defined by their effects on behavior. Specifically, a reinforcer is any stimulus or event that functions to increase the likelihood of the behavior that led to it, whereas a punisher is any stimulus or event that functions to decrease the likelihood of the behavior that led to it.

reinforcer

Any stimulus or event that functions to increase the likelihood of the behavior that led to it.

punisher

Any stimulus or event that functions to decrease the likelihood of the behavior that led to it.

Whether a particular stimulus acts as a reinforcer or a punisher depends in part on whether it increases or decreases the likelihood of a behavior. Presenting food is usually reinforcing and produces an increase in the behavior that led to it; removing food is often punishing and leads to a decrease in the behavior. Turning on a device that causes an electric shock is typically punishing (and decreases the behavior that led to it); turning it off is rewarding (and increases the behavior that led to it).

To keep these possibilities distinct, Skinner used the term positive for situations in which a stimulus was presented and negative for situations in which it was removed. Consequently, there is positive reinforcement (where a rewarding stimulus is presented) and negative reinforcement (where an unpleasant stimulus is removed), as well as positive punishment (where an unpleasant stimulus is administered) and negative punishment (where a rewarding stimulus is removed). Here the words positive and negative mean, respectively, something that is added or something that is taken away, but the terms do not mean “good” or “bad” as they do in everyday speech. As you can see from TABLE 7.1, positive and negative reinforcement increase the likelihood of the behavior; positive and negative punishment decrease the likelihood of the behavior.

| | Increases the Likelihood of Behavior | Decreases the Likelihood of Behavior |
|---|---|---|
| Stimulus is presented | Positive reinforcement | Positive punishment |
| Stimulus is removed | Negative reinforcement | Negative punishment |

These distinctions can be confusing at first; after all, “negative reinforcement” and “punishment” both sound like they should be “bad” and produce the same type of behavior. However, negative reinforcement involves something pleasant; it’s the removal of something unpleasant, like a shock, and the absence of a shock is indeed pleasant.
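One way to keep the four terms straight is to treat them as a lookup on two independent questions: was a stimulus presented or removed, and did the behavior become more or less likely? The short Python sketch below is only an illustration of that logic; the function name and the string labels are hypothetical and are not part of Skinner's terminology.

```python
def classify_consequence(stimulus_change: str, behavior_change: str) -> str:
    """Name the operant term for one cell of the 2 x 2 table.

    stimulus_change: "presented" or "removed"
    behavior_change: "increases" or "decreases"
    (These labels are illustrative assumptions, not standard identifiers.)
    """
    # "Positive" and "negative" describe adding or taking away a stimulus,
    # not whether the outcome is good or bad.
    sign = {"presented": "positive", "removed": "negative"}[stimulus_change]
    # Reinforcement and punishment are defined by the effect on behavior.
    kind = {"increases": "reinforcement", "decreases": "punishment"}[behavior_change]
    return f"{sign} {kind}"


# Turning off a shock (stimulus removed) makes the behavior more likely.
print(classify_consequence("removed", "increases"))
```

Notice that the function never asks whether the stimulus is pleasant; the two questions above are enough to pick the cell.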

Why is reinforcement more constructive than punishment in learning desired behavior?

Reinforcement is generally more effective than punishment in promoting learning. There are many reasons (Gershoff, 2002), but one reason is this: Punishment signals that an unacceptable behavior has occurred, but it doesn’t specify what should be done instead. Spanking a young child for starting to run into a busy street certainly stops the behavior, but it doesn’t promote any kind of learning about the desired behavior.

Primary and Secondary Reinforcement and Punishment

Reinforcers and punishers often gain their functions from basic biological mechanisms. A pigeon that pecks at a target in a Skinner box is usually reinforced with food pellets, just as an animal that learns to escape a mild electric shock has avoided the punishment of tingly paws. Food, comfort, shelter, or warmth are examples of primary reinforcers because they help satisfy biological needs. However, the vast majority of reinforcers or punishers in our daily lives have little to do with biology: Verbal approval, a bronze trophy, or money all serve powerful reinforcing functions, yet none of them taste very good or help keep you warm at night.


These secondary reinforcers derive their effectiveness from their associations with primary reinforcers through classical conditioning. For example, money starts out as a neutral CS that, through its association with primary USs like acquiring food or shelter, takes on a conditioned emotional element. Flashing lights, originally a neutral CS, acquire powerful negative elements through association with a speeding ticket and a fine.

Immediate versus Delayed Reinforcement and Punishment

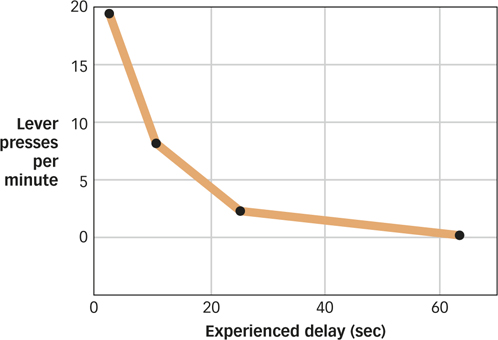

A key determinant of the effectiveness of a reinforcer is the amount of time between the occurrence of a behavior and the reinforcer: The more time that elapses, the less effective the reinforcer (Lattal, 2010; Renner, 1964). This was dramatically illustrated in experiments in which food reinforcers were given at varying times after the rat pressed the lever (Dickinson, Watt, & Griffiths, 1992). Delaying reinforcement by even a few seconds led to a reduction in the number of times the rat subsequently pressed the lever, and extending the delay to a minute rendered the food reinforcer completely ineffective (see FIGURE 7.7). The most likely explanation for this effect is that delaying the reinforcer made it difficult for the rats to figure out exactly what behavior they needed to perform in order to obtain it. In the same way, parents who wish to use a piece of candy to reinforce their children for playing quietly should provide the candy while the child is still playing quietly; waiting until later when the child may be engaging in other behaviors—

How does the concept of delayed reinforcement relate to difficulties with quitting smoking?

The greater potency of immediate versus delayed reinforcers may help us to appreciate why it can be difficult to engage in behaviors that have long-


The Basic Principles of Operant Conditioning

After establishing how reinforcement and punishment produced learned behavior, Skinner and other scientists began to expand the parameters of operant conditioning. Let’s look at some of these basic principles of operant conditioning.

Culture & Community: Are there cultural differences in reinforcers?

Operant approaches that use positive reinforcement have been applied extensively in everyday settings such as behavior therapy (see Treatment of Psychological Disorders, pp. 476–

Recently, 750 high school students from America, Australia, Tanzania, Denmark, Honduras, Korea, and Spain were surveyed in order to evaluate possible cross-

These results should be taken with a grain of salt because the researchers did not control for variables other than culture that could influence the results, such as economic status. Nonetheless, the study suggests that cultural differences should be considered in the design of programs or interventions that rely on the use of reinforcers to influence the behavior of individuals who come from different cultures.

Discrimination and Generalization

Operant conditioning shows both discrimination and generalization effects similar to those we saw with classical conditioning. For example, in one study, researchers presented either an Impressionist painting by Monet or a Cubist painting by Picasso (Watanabe, Sakamoto, & Wakita, 1995). Participants in the experiment were only reinforced if they responded when the appropriate painting was presented. After training, the participants discriminated appropriately; those trained with the Monet painting responded when other paintings by Monet were presented, but not when other Cubist paintings by Picasso were shown; those trained with a Picasso painting showed the opposite behavior. What’s more, the research participants showed that they could generalize: Those trained with Monet responded appropriately when shown other Impressionist paintings, and the Picasso-



Extinction

As in classical conditioning, operant behavior undergoes extinction when the reinforcements stop. Pigeons cease pecking at a key if food is no longer presented following the behavior. You wouldn’t put more money into a vending machine if it failed to give you its promised candy bar or soda. On the surface, extinction of operant behavior looks like that of classical conditioning.

However, there is an important difference. As noted, in classical conditioning, the US occurs on every trial no matter what the organism does. In operant conditioning, the reinforcements only occur when the proper response has been made, and they don’t always occur even then. Not every trip into the forest produces nuts for a squirrel, auto salespeople don’t sell to everyone who takes a test drive, and researchers run many experiments that do not work out and never get published. Yet these behaviors don’t weaken and gradually extinguish. Extinction is a bit more complicated in operant conditioning than in classical conditioning because it depends, in part, on how often reinforcement is received. In fact, this principle is an important cornerstone of operant conditioning that we’ll examine next.

How is the concept of extinction different in operant conditioning versus classical conditioning?

Schedules of Reinforcement

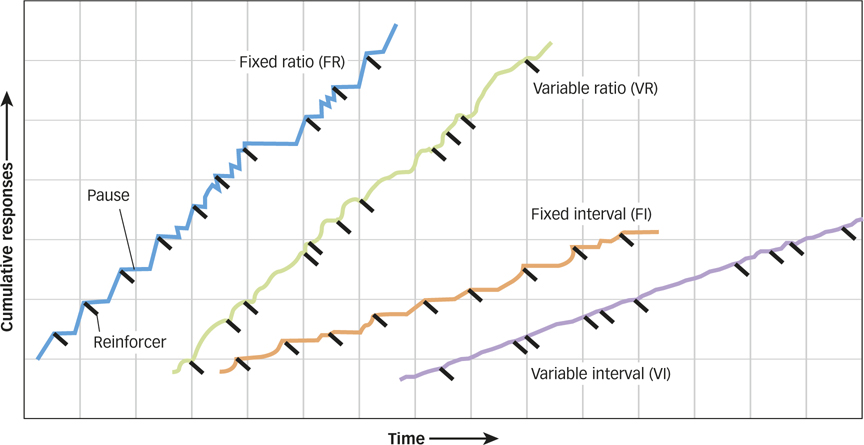

One day, Skinner was laboriously hand-

Interval Schedules. Under a fixed-interval schedule (FI), reinforcers are presented at fixed-

fixed-interval schedule (FI)

An operant conditioning principle in which reinforcers are presented at fixed-


Under a variable-interval schedule (VI), a behavior is reinforced based on an average time that has expired since the last reinforcement. For example, on a 2-

variable-interval schedule (VI)

An operant conditioning principle in which behavior is reinforced based on an average time that has expired since the last reinforcement.
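The logic of a variable-interval schedule can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the class name, the list of waiting times, and the choice to have the organism respond once per time unit are all hypothetical, chosen only to show that a response is reinforced when enough time has passed and that the required wait changes from one reinforcer to the next.

```python
from itertools import cycle


class VariableIntervalSchedule:
    """Reinforce the first response made after a waiting time has elapsed.

    The waits differ from one reinforcer to the next; only their average
    is fixed. The specific waits are supplied by the caller (an assumption
    made here so the example is reproducible).
    """

    def __init__(self, waits):
        self._waits = cycle(waits)             # reuse the list if it runs out
        self._available_at = next(self._waits)

    def respond(self, t):
        """Return True if a response made at time t is reinforced."""
        if t < self._available_at:
            return False                       # too early: nothing happens
        # Reinforce, then start timing the next (different) wait from now.
        self._available_at = t + next(self._waits)
        return True


schedule = VariableIntervalSchedule(waits=[1, 3, 2])   # average wait of 2
reinforced = [t for t in range(13) if schedule.respond(t)]
print(reinforced)
```

Because the organism cannot tell how long the current wait is, responding at a steady pace is the only way to collect each reinforcer soon after it becomes available.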

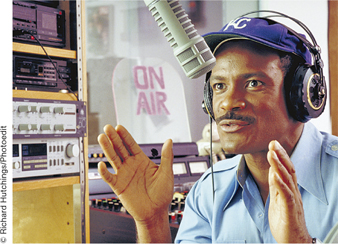

How does a radio station use scheduled reinforcements to keep you listening?

Both fixed-

How do ratio schedules work to keep you spending your money?

Ratio Schedules. Under a fixed-ratio schedule (FR), reinforcement is delivered after a specific number of responses have been made. One schedule might present reinforcement after every fourth response, a different schedule might present reinforcement after every 20 responses; the special case of presenting reinforcement after each response is called continuous reinforcement. There are many situations in which people are reinforced on a fixed-

fixed-ratio schedule (FR)

An operant conditioning principle in which reinforcement is delivered after a specific number of responses have been made.


Under a variable-ratio schedule (VR), the delivery of reinforcement is based on a particular average number of responses. For example, slot machines in a modern casino pay off on variable-

variable-ratio schedule (VR)

An operant conditioning principle in which the delivery of reinforcement is based on a particular average number of responses.
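The two ratio schedules differ only in how the required number of responses is chosen, which a short Python sketch can make concrete. The function names are hypothetical, and the way the variable-ratio requirement is drawn (uniformly from 1 to twice the mean minus 1) is an assumption made for illustration; any distribution with the right average would fit the definition.

```python
import random


def fixed_ratio(n_responses, ratio):
    """Reinforce every `ratio`-th response.

    ratio=1 is the special case of continuous reinforcement.
    """
    return [i % ratio == 0 for i in range(1, n_responses + 1)]


def variable_ratio(n_responses, mean_ratio, seed=0):
    """Reinforce after a varying number of responses averaging `mean_ratio`.

    Assumption: each requirement is drawn uniformly from
    1..(2 * mean_ratio - 1), which has the requested mean.
    """
    rng = random.Random(seed)
    outcomes = []
    needed = rng.randint(1, 2 * mean_ratio - 1)
    for _ in range(n_responses):
        needed -= 1
        if needed == 0:
            outcomes.append(True)              # requirement met: reinforce
            needed = rng.randint(1, 2 * mean_ratio - 1)
        else:
            outcomes.append(False)
    return outcomes


print(fixed_ratio(8, 4))
print(sum(variable_ratio(10_000, 4)))          # roughly one in four responses
```

Under the fixed schedule the organism can count its way to the next reinforcer; under the variable schedule the very next response might always be the one that pays off.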

Not surprisingly, variable-

When schedules of reinforcement provide intermittent reinforcement, in which only some of the responses made are followed by reinforcement, they produce behavior that is much more resistant to extinction than a continuous reinforcement schedule. One way to think about this effect is to recognize that the more irregular and intermittent a schedule is, the more difficult it becomes for an organism to detect when the behavior has actually been placed on the road to extinction. For example, if you’ve just put a dollar into a soda machine that, unbeknownst to you, is broken, no soda comes out. Because you’re used to getting your sodas on a continuous reinforcement schedule—

intermittent reinforcement

An operant conditioning principle in which only some of the responses made are followed by reinforcement.

intermittent reinforcement effect

The fact that operant behaviors that are maintained under intermittent reinforcement schedules resist extinction better than those maintained under continuous reinforcement.

How can operant conditioning produce complex behaviors?

shaping

Learning that results from the reinforcement of successive steps to a final desired behavior.

Shaping through Successive Approximations

Have you ever been to AquaLand and wondered how the dolphins learn to jump up in the air, twist around, splash back down, do a somersault, and then jump through a hoop, all in one smooth motion? Well, they don’t. At least not all at once. Rather, elements of their behavior are shaped over time until the final product looks like one smooth motion.

Behavior rarely occurs in fixed frameworks in which a stimulus is presented and then an organism has to engage in some activity or another. Most of our behaviors are the result of shaping, learning that results from the reinforcement of successive steps to a final desired behavior. The outcomes of one set of behaviors shape the next set of behaviors, whose outcomes shape the next set of behaviors, and so on.

Skinner noted that if you put a rat in a Skinner box and wait for it to press the bar, you could end up waiting a very long time: Bar pressing just isn’t very high in a rat’s natural hierarchy of responses. However, it is relatively easy to shape bar pressing. Wait until the rat turns in the direction of the bar, and then deliver a food reward. This will reinforce turning toward the bar, making such a movement more likely. Now wait for the rat to take a step toward the bar before delivering food; this will reinforce moving toward the bar. After the rat walks closer to the bar, wait until it touches the bar before presenting the food. Notice that none of these behaviors is the final desired behavior (reliably pressing the bar). Rather, each behavior is a successive approximation to the final product, or a behavior that gets incrementally closer to the overall desired behavior. In the dolphin example—


Superstitious Behavior

Everything we’ve discussed so far suggests that one of the keys to establishing reliable operant behavior is the correlation between an organism’s response and the occurrence of reinforcement. As you read in the Methods in Psychology chapter, however, just because two things are correlated (i.e., they tend to occur together in time and space) doesn’t imply that there is causality (i.e., the presence of one reliably causes the other to occur).

How would a behaviorist explain superstitions?

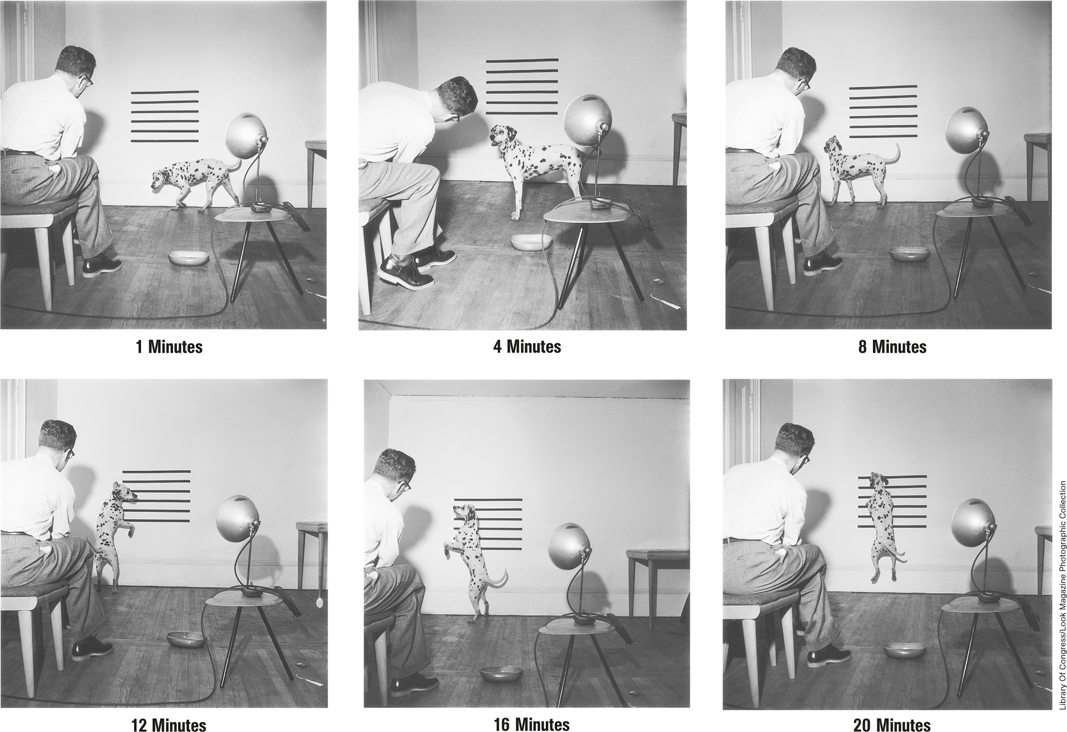

Skinner (1948) designed an experiment that illustrates this distinction. He put several pigeons in Skinner boxes, set the food dispenser to deliver food every 15 seconds, and left the birds to their own devices. Later, he returned and found the birds engaging in odd, idiosyncratic behaviors, such as pecking aimlessly in a corner or turning in circles. He referred to these behaviors as “superstitious” and offered a behaviorist analysis of their occurrence. A pigeon that just happened to have pecked randomly in the corner when the food showed up had connected the delivery of food to that behavior.


Because this pecking behavior was reinforced by the delivery of food, the pigeon was likely to repeat it. Now pecking in the corner was more likely to occur, and it was more likely to be reinforced 15 seconds later when the food appeared again. Skinner’s pigeons acted as though there was a causal relationship between their behaviors and the appearance of food when it was merely an accidental correlation.
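The feedback loop Skinner described can be imitated with a toy simulation. The Python sketch below is not a model of his experiment; it simply assumes a pigeon with a handful of behaviors (two taken from the text, two invented for illustration), delivers food on a fixed clock regardless of what the bird does, and strengthens whichever behavior happened to be under way when the food arrived. The function name, the strengthening rule, and all numerical values are hypothetical.

```python
import random


def superstition_demo(seconds=600, food_every=15, boost=1.0, seed=0):
    """Deliver food on a timer and strengthen whatever behavior preceded it."""
    rng = random.Random(seed)
    # Every behavior starts out equally likely. The last two are invented.
    weights = {
        "peck in corner": 1.0,
        "turn in circle": 1.0,
        "bob head": 1.0,
        "flap wings": 1.0,
    }
    deliveries = 0
    for t in range(1, seconds + 1):
        behaviors = list(weights)
        # The pigeon emits one behavior per second, in proportion to its strength.
        current = rng.choices(behaviors, weights=[weights[b] for b in behaviors])[0]
        if t % food_every == 0:
            # Food arrives on schedule no matter what the pigeon is doing...
            deliveries += 1
            # ...but the behavior under way at that moment is reinforced anyway.
            weights[current] += boost
    return weights, deliveries


weights, deliveries = superstition_demo()
print(deliveries)
print(max(weights, key=weights.get))
```

The number of food deliveries is fixed by the clock alone, yet the behaviors do not stay equally likely: an early accidental pairing makes a behavior more frequent, which in turn makes it more likely to be under way at the next delivery.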

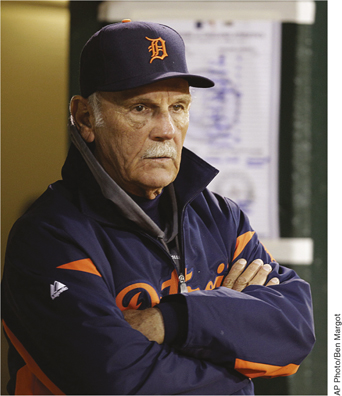

Although some researchers questioned Skinner’s characterization of these behaviors as superstitious (Staddon & Simmelhag, 1971), later studies have shown that people, like pigeons, often behave as though there’s a causal connection between their responses and reward when in fact the connection is merely accidental (Bloom et al., 2007; Mellon, 2009; Ono, 1987; Wagner & Morris, 1987). For example, baseball players who hit several home runs on a day when they happened not to have showered are likely to continue that tradition, laboring under the belief that the accidental correlation between poor personal hygiene and a good day at bat is somehow causal. This “stench causes home runs” hypothesis is just one of many examples of human superstitions (Gilbert et al., 2000; Radford & Radford, 1949).

A Deeper Understanding of Operant Conditioning

To behaviorists such as Watson and Skinner, an organism behaved in a certain way as a response to stimuli in the environment, not because there was any wanting, wishing, or willing by the animal in question. However, some research on operant conditioning digs deeper into the underlying mechanisms that produce the familiar outcomes of reinforcement. Let’s examine three elements that expand our view of operant conditioning: the cognitive, neural, and evolutionary elements of operant conditioning.

The Cognitive Elements of Operant Conditioning

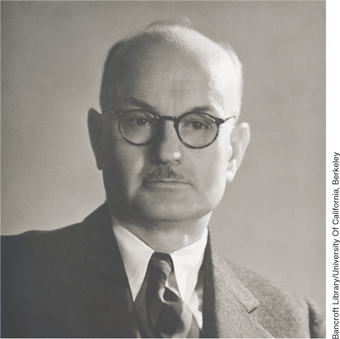

Edward Chace Tolman (1886–

Tolman’s ideas may remind you of the Rescorla–

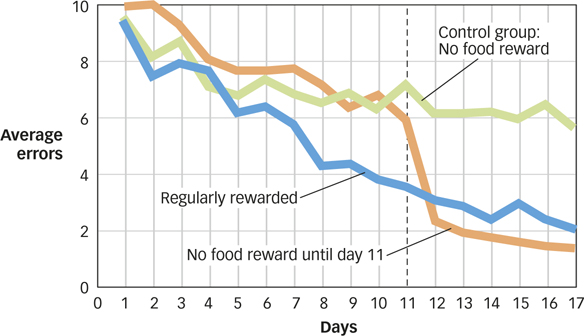

Latent Learning and Cognitive Maps. In latent learning, something is learned, but it is not manifested as a behavioral change until sometime in the future. Latent learning can easily be established in rats and occurs without any obvious reinforcement, a finding that posed a direct challenge to the then-

latent learning

Something is learned, but it is not manifested as a behavioral change until sometime in the future.

Tolman gave three groups of rats access to a complex maze every day for over 2 weeks. The rats in the control group never received any reinforcement for navigating the maze. They were simply allowed to run around until they reached the goal box at the end of the maze. In FIGURE 7.9 you can see that over the 2 weeks of the study, the control group rats (in green) got a little better at finding their way through the maze, but not by much. A second group of rats (in blue) received regular reinforcements; when they reached the goal box, they found a small food reward there. Not surprisingly, these rats showed clear learning. A third group (orange) was treated exactly like the control group for the first 10 days and then rewarded for the last 7 days. For the first 10 days, these rats behaved like those in the control group. However, during the final 7 days, they behaved like the rats that had been reinforced every day. Clearly, the rats in this third group had learned a lot about the maze and the location of the goal box during those first 10 days even though they had not received any reinforcements for their behavior. In other words, they showed evidence of latent learning.


These results suggested to Tolman that his rats had developed a cognitive map, a mental representation of the physical features of the environment (Tolman & Honzik, 1930b; Tolman, Ritchie, & Kalish, 1946). Support for this idea was obtained in a clever experiment, in which Tolman trained rats in a maze and then changed the maze—

cognitive map

A mental representation of the physical features of the environment.

What are cognitive maps, and why are they a challenge to behaviorism?

Learning to Trust: For Better or Worse. Cognitive factors also played a key role in an experiment examining learning and brain activity (using fMRI) in people who played a “trust” game with a fictional partner (Delgado, Frank, & Phelps, 2005). On each trial, a participant could either keep a $1 reward or transfer the reward to a partner, who would receive $3. The partner could then either keep the $3 or share half of it with the participant. When playing with a partner who was willing to share the reward, the participant would be better off transferring the money, but when playing with a partner who did not share, the participant would be better off keeping the $1. Participants in such experiments typically learn who is trustworthy on the basis of trial-

In the study by Delgado et al., participants were given detailed descriptions of their partners that either portrayed the partners as trustworthy, neutral, or suspect. Even though during the game, all three of the partners shared equally often, the participants’ cognitions about their partners had powerful effects. Participants transferred more money to the trustworthy partner than to the others, essentially ignoring the trial-
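The payoffs described for the trust game can be worked through explicitly. The Python sketch below uses only the dollar amounts given above ($1 to keep, $3 to the partner after a transfer, half returned if the partner shares); the function names are hypothetical, and the expected-value comparison assumes a participant who cares only about average earnings, which is a simplification rather than a claim about the study.

```python
def participant_payoff(transfer: bool, partner_shares: bool) -> float:
    """Dollars the participant ends a trial with."""
    if not transfer:
        return 1.0                 # kept the $1 reward
    # After a transfer the partner holds $3 and may return half of it.
    return 3.0 / 2 if partner_shares else 0.0


def expected_payoff_if_transfer(p_share: float) -> float:
    """Average payoff from transferring when the partner shares with probability p_share."""
    return p_share * participant_payoff(True, True)


print(participant_payoff(True, True))        # partner shares
print(participant_payoff(True, False))       # partner keeps everything
print(expected_payoff_if_transfer(0.5))      # partner shares half the time
```

On these numbers, transferring beats keeping the $1 on average only when the partner shares on more than two thirds of trials, which is why learning how often a given partner actually shares matters so much in this game.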

The Neural Elements of Operant Conditioning

The first hint of how specific brain structures might contribute to the process of reinforcement came from James Olds and his associates, who inserted tiny electrodes into different parts of a rat’s brain and allowed the animal to control electric stimulation of its own brain by pressing a bar. They discovered that some brain areas produced what appeared to be intensely positive experiences: The rats would press the bar repeatedly to stimulate these structures, sometimes ignoring food, water, and other life-

How do specific brain structures contribute to the process of reinforcement?

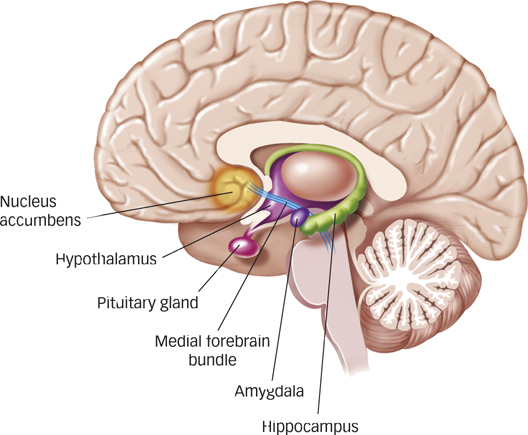

In the years since these early studies, researchers have identified a number of structures and pathways in the brain that deliver rewards through stimulation (Wise, 1989, 2005). The neurons in the medial forebrain bundle, a pathway that meanders its way from the midbrain through the hypothalamus into the nucleus accumbens, are the most susceptible to stimulation that produces pleasure. This is not surprising because psychologists have identified this bundle of cells as crucial to behaviors that clearly involve pleasure, such as eating, drinking, and engaging in sexual activity. In addition, the neurons all along this pathway, and especially those in the nucleus accumbens itself, are all dopaminergic (i.e., they secrete the neurotransmitter dopamine). Remember from the Neuroscience and Behavior chapter that higher levels of dopamine in the brain are usually associated with positive emotions. In recent years, several competing hypotheses about the precise role of dopamine have emerged, including the idea that dopamine is more closely linked with the expectation of reward than with reward itself (Fiorillo, Newsome, & Schultz, 2008; Schultz, 2006, 2007), or that dopamine is more closely associated with wanting or even craving something rather than simply liking it (Berridge, 2007). Whichever view turns out to be correct, dopamine seems to play a key role in how we process reward. (For more on the relationship between dopamine and Parkinson’s disease, see the Hot Science box: Dopamine and Reward Learning in Parkinson’s Disease.)


Hot Science: Dopamine and Reward Learning in Parkinson’s Disease


Many of us have relatives or friends who have been affected by Parkinson’s disease, a movement disorder that involves loss of dopamine-

Research suggests that dopamine plays an important role in prediction error: the difference between the actual reward received versus the amount of predicted or expected reward. For example, when an animal presses a lever and receives an unexpected food reward, a positive prediction error occurs (a better than expected outcome), and the animal learns to press the lever again. By contrast, when an animal expects to receive a reward by pressing a lever but does not receive it, a negative prediction error occurs (a worse than expected outcome), and the animal will subsequently be less likely to press the lever again. Prediction error can thus serve as a kind of “teaching signal” that helps the animal to learn to behave in a way that maximizes reward. Intriguingly, dopamine neurons in the reward centers of a monkey’s brain show increased activity when the monkey receives unexpected juice rewards and decreased activity when the monkey does not receive expected juice rewards, suggesting that dopamine neurons play an important role in generating the prediction error (Schultz, 2006, 2007; Schultz, Dayan, & Montague, 1997). Neuroimaging studies show that human brain regions involved in reward-

So, how do these findings relate to people with Parkinson’s disease? Several studies report that reward-

More studies will be needed to unravel the complex relations among dopamine, reward prediction error, learning, and Parkinson’s disease, but the studies to date suggest that such research should have important practical as well as scientific implications.
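The idea of prediction error as a "teaching signal" can be illustrated with a few lines of Python. The error itself follows the definition given in this box (actual reward minus predicted reward). The update rule, which nudges the prediction a fixed fraction of the way toward the outcome, is a common simplification assumed here for illustration; it is not presented in the studies cited, and the function names and learning rate are hypothetical.

```python
def prediction_error(actual: float, predicted: float) -> float:
    """Positive when the outcome is better than expected, negative when worse."""
    return actual - predicted


def update_prediction(predicted: float, actual: float, learning_rate: float = 0.5) -> float:
    """Move the prediction part of the way toward what actually happened.

    Assumption: a simple error-correcting rule with a fixed learning rate.
    """
    return predicted + learning_rate * prediction_error(actual, predicted)


# An animal that expects nothing receives a reward of 1.0 on three lever presses.
predicted = 0.0
history = []
for _ in range(3):
    predicted = update_prediction(predicted, actual=1.0)
    history.append(predicted)
print(history)
```

As the reward becomes expected, the prediction error shrinks toward zero; if the reward is then withheld, the error turns negative and the prediction falls again.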


The Evolutionary Elements of Operant Conditioning

As you’ll recall, classical conditioning has an adaptive value that has been fine-

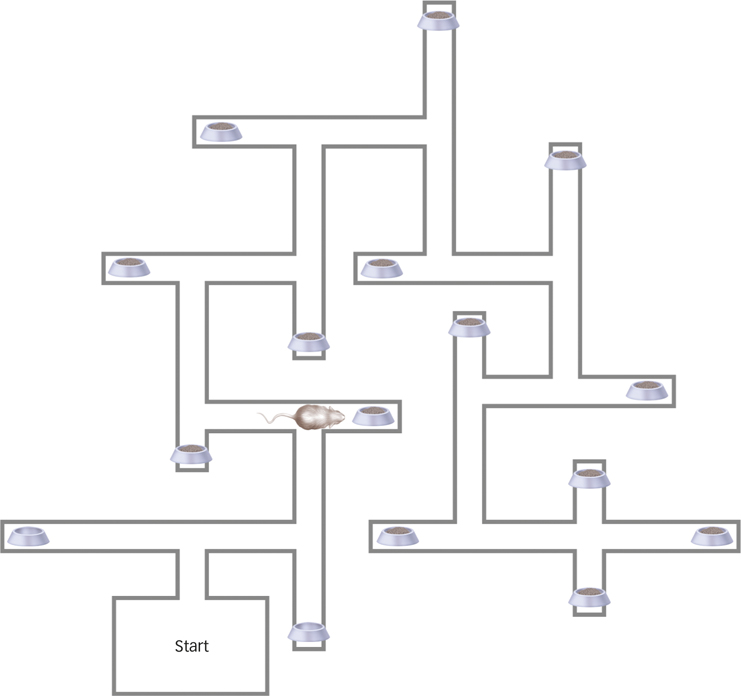

What was puzzling from a behaviorist perspective makes sense when viewed from an evolutionary perspective. Rats are foragers, and like all foraging species, they have evolved a highly adaptive strategy for survival. They move around in their environments looking for food. If they find it somewhere, they eat it (or store it) and then go look somewhere else for more. So, if the rat just found food in the right arm of a T maze, the obvious place to look next time is the left arm. The rat knows that there isn’t any more food in the right arm because it just ate the food it found there! Indeed, given the opportunity to explore a complex environment like the multiarm maze shown in FIGURE 7.12, rats will systematically go from arm to arm collecting food, rarely returning to an arm they have previously visited (Olton & Samuelson, 1976).
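The advantage of the foraging strategy can be shown with a small comparison. The Python sketch below assumes a maze with eight arms, each holding one piece of food, and contrasts two hypothetical rules: "win-shift" (never return to an arm already visited) and "win-stay" (go back to the arm that was just rewarded). The function name, the labels, and the maze size are assumptions made for illustration; they do not reproduce the procedure of Olton and Samuelson (1976).

```python
def food_collected(strategy: str, n_arms: int = 8, n_choices: int = 8) -> int:
    """Count the food found in `n_choices` arm visits under a given strategy."""
    baited = set(range(n_arms))      # each arm starts with one piece of food
    visited = set()
    arm, total = 0, 0
    for _ in range(n_choices):
        rewarded = arm in baited
        if rewarded:
            baited.remove(arm)       # the food is eaten and not replaced
            total += 1
        visited.add(arm)
        if strategy == "win-shift":
            # Forager's rule: move on to an arm not yet visited.
            unvisited = [a for a in range(n_arms) if a not in visited]
            arm = unvisited[0] if unvisited else arm
        elif strategy == "win-stay":
            # Repeat the rewarded choice; move on only after coming up empty.
            arm = arm if rewarded else (arm + 1) % n_arms
        else:
            raise ValueError(f"unknown strategy: {strategy}")
    return total


print(food_collected("win-shift"))
print(food_collected("win-stay"))
```

Because eaten food is not replaced, returning to a rewarded arm wastes every second visit, which is the sense in which shifting away from a just-rewarded location is the adaptive choice for a forager.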

What explains a rat’s behavior in a T maze?



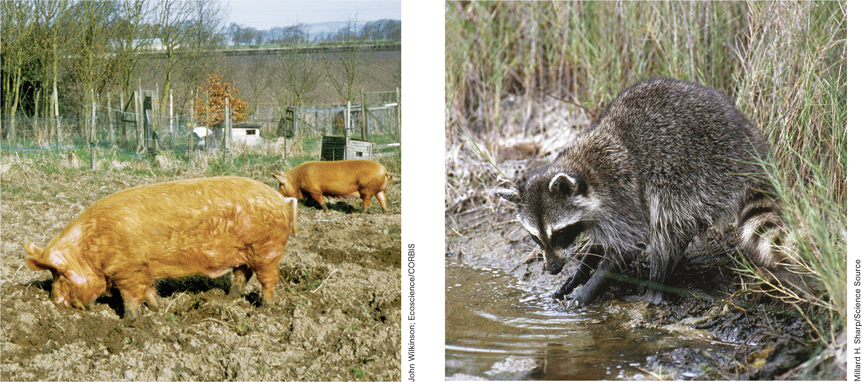

Two of Skinner’s former students, Keller Breland and Marian Breland, were among the first researchers to discover that it wasn’t just rats in T mazes that presented a problem for behaviorists (Breland & Breland, 1961). The Brelands, who made a career out of training animals for commercials and movies, often used pigs because pigs are surprisingly good at learning all sorts of tricks. However, the Brelands discovered that it was extremely difficult to teach a pig the simple task of dropping coins in a box. Instead of depositing the coins, the pigs persisted in rooting with them as if they were digging them up in soil, tossing them in the air with their snouts, and pushing them around. The Brelands tried to train raccoons at the same task, with different but equally dismal results. The raccoons spent their time rubbing the coins between their paws instead of dropping them in the box. Having learned the association between the coins and food, the animals began to treat the coins as stand-

SUMMARY QUIZ [7.2]

Question 7.5

1. Which of the following is NOT an accurate statement concerning operant conditioning?

- a. Actions and outcomes are critical to operant conditioning.
- b. Operant conditioning involves the reinforcement of behavior.
- c. Complex behaviors cannot be accounted for by operant conditioning.
- d. Operant conditioning has roots in evolutionary behavior.

Answer: c.

Question 7.6

2. Which of the following mechanisms have no role in Skinner’s approach to behavior?

- a. cognitive
- b. neural
- c. evolutionary
- d. all of the above

Answer: d.

Question 7.7

3. Latent learning provides evidence for a cognitive element in operant conditioning because

- a. it occurs without any obvious reinforcement.
- b. it requires both positive and negative reinforcement.
- c. it points toward the operation of a neural reward center.
- d. it depends on a stimulus–response relationship.

Answer: a.