22.1 Skinner's Experiments


B. F. Skinner (1904–

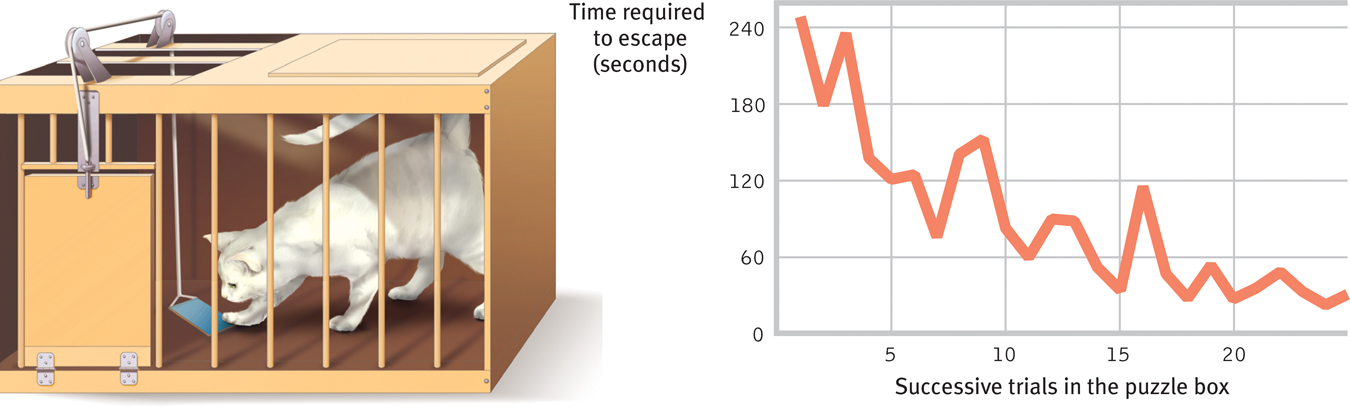

Figure 22.1 Cat in a puzzle box. Thorndike used a fish reward to entice cats to find their way out of a puzzle box (left) through a series of maneuvers. The cats’ performance tended to improve with successive trials (right), illustrating Thorndike’s law of effect. (Adapted from Thorndike, 1898.)

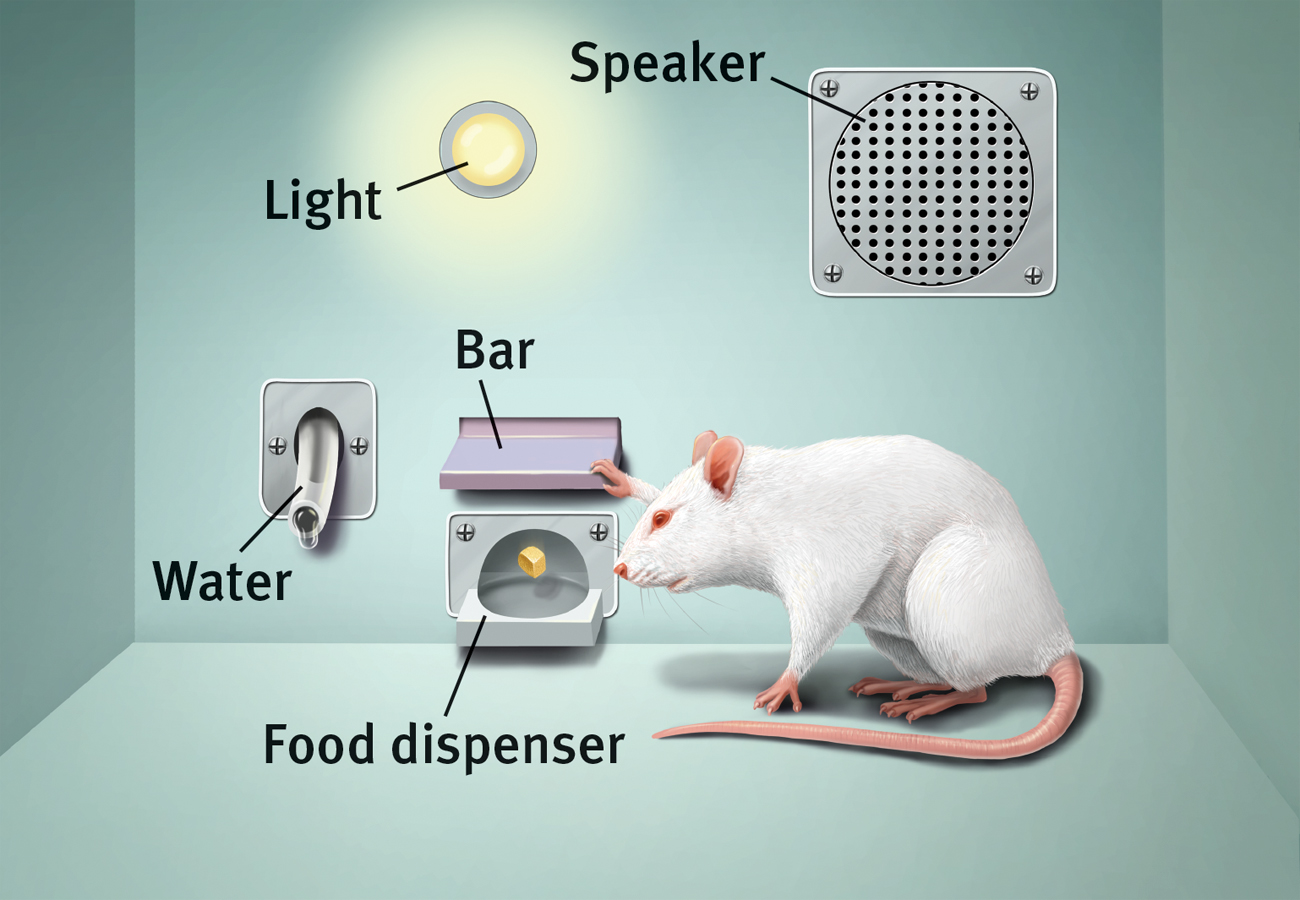

For his pioneering studies, Skinner designed an operant chamber, popularly known as a Skinner box (FIGURE 22.2). The box has a bar (a lever) that an animal presses—

Figure 22.2 A Skinner box. Inside the box, the rat presses a bar for a food reward. Outside, a measuring device (not shown here) records the animal’s accumulated responses.


Shaping Behavior

Imagine that you wanted to condition a hungry rat to press a bar. Like Skinner, you could tease out this action with shaping, gradually guiding the rat’s actions toward the desired behavior. First, you would watch how the animal naturally behaves, so that you could build on its existing behaviors. You might give the rat a bit of food each time it approaches the bar. Once the rat is approaching regularly, you would give the food only when it moves close to the bar, then closer still. Finally, you would require it to touch the bar to get food. With this method of successive approximations, you reward responses that are ever closer to the final desired behavior, and you ignore all other responses. By making rewards contingent on desired behaviors, researchers and animal trainers gradually shape complex behaviors.
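The method of successive approximations described above can be sketched as a small rule: reward any response that meets the current criterion, ignore everything else, and tighten the criterion once the animal meets it regularly. This is only an illustrative sketch. The class name `Shaper`, the step labels, and the `rewards_to_advance` threshold (a stand-in for "approaching regularly") are assumptions made for this example, not part of Skinner's procedure.

```python
class Shaper:
    """Toy model of shaping by successive approximations (illustrative only)."""

    def __init__(self, steps, rewards_to_advance=3):
        self.steps = steps                      # ordered from crudest to final behavior
        self.rewards_to_advance = rewards_to_advance  # assumed meaning of "regularly"
        self.level = 0                          # index of the current criterion
        self.rewards = 0                        # rewards earned at this criterion

    def observe(self, behavior):
        """Return True if this behavior earns food under the current criterion."""
        if behavior not in self.steps:
            return False                        # all other responses are ignored
        if self.steps.index(behavior) < self.level:
            return False                        # earlier approximations no longer pay
        self.rewards += 1
        can_advance = self.level < len(self.steps) - 1
        if can_advance and self.rewards >= self.rewards_to_advance:
            self.level += 1                     # require a closer approximation
            self.rewards = 0
        return True


# Hypothetical step labels following the rat example in the text.
steps = ["approaches bar", "moves close", "moves closer", "touches bar"]
trainer = Shaper(steps, rewards_to_advance=2)
for act in ["approaches bar", "grooms", "approaches bar", "approaches bar", "moves close"]:
    print(act, "->", "food" if trainer.observe(act) else "no food")
```

In this sketch a response that exceeds the current criterion is also rewarded, which matches the idea of rewarding responses that are ever closer to the final behavior.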

Shaping can also help us understand what nonverbal organisms perceive. Can a dog distinguish red and green? Can a baby hear the difference between lower-

Skinner noted that we continually reinforce and shape others’ everyday behaviors, though we may not mean to do so. Billy’s whining annoys his parents, for example, but consider how they typically respond:

Billy: Could you tie my shoes?

Father: (Continues reading paper.)

Billy: Dad, I need my shoes tied.

Father: Uh, yeah, just a minute.

Billy: DAAAAD! TIE MY SHOES!

Father: How many times have I told you not to whine? Now, which shoe do we do first?

Billy’s whining is reinforced, because he gets something desirable—


Or consider a teacher who pastes gold stars on a wall chart beside the names of children scoring 100 percent on spelling tests. As everyone can then see, some children consistently do perfect work. The others, who may have worked harder than the academic all-

Types of Reinforcers


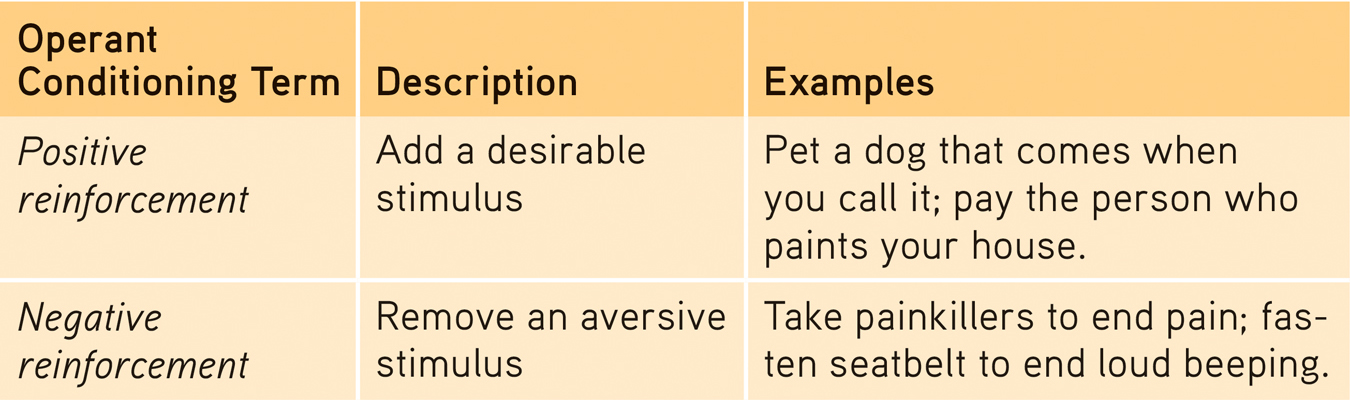

Until now, we’ve mainly been discussing positive reinforcement, which strengthens responding by presenting a typically pleasurable stimulus after a response. But, as we saw in the whining Billy story, there are two basic kinds of reinforcement (TABLE 22.1). Negative reinforcement strengthens a response by reducing or removing something negative. Billy’s whining was positively reinforced, because Billy got something desirable—

Table 22.1 Ways to Increase Behavior

RETRIEVAL PRACTICE

- How is operant conditioning at work in this cartoon?

The baby negatively reinforces her parents when she stops crying once they grant her wish. Her parents positively reinforce her cries by letting her sleep with them.

Sometimes negative and positive reinforcement coincide. Imagine a worried student who, after goofing off and getting a bad exam grade, studies harder for the next exam. This increased effort may be negatively reinforced by reduced anxiety, and positively reinforced by a better grade. We reap the rewards of escaping the aversive stimulus, which increases the chances that we will repeat our behavior. The point to remember: Whether it works by reducing something aversive, or by providing something desirable, reinforcement is any consequence that strengthens behavior.

Primary and Conditioned Reinforcers

Getting food when hungry or having a painful headache go away is innately satisfying. These primary reinforcers are unlearned. Conditioned reinforcers, also called secondary reinforcers, get their power through learned association with primary reinforcers. If a rat in a Skinner box learns that a light reliably signals a food delivery, the rat will work to turn on the light (see Figure 22.2). The light has become a conditioned reinforcer. Our lives are filled with conditioned reinforcers—


Immediate and Delayed Reinforcers

Let’s return to the imaginary shaping experiment in which you were conditioning a rat to press a bar. Before performing this “wanted” behavior, the hungry rat will engage in a sequence of “unwanted” behaviors—

Unlike rats, humans do respond to delayed reinforcers: the paycheck at the end of the week, the good grade at the end of the semester, the trophy at the end of the season. Indeed, to function effectively we must learn to delay gratification. In laboratory testing, some 4-

To our detriment, small but immediate consequences (the enjoyment of watching late-

Reinforcement Schedules


In most of our examples, the desired response has been reinforced every time it occurs. But reinforcement schedules vary. With continuous reinforcement, learning occurs rapidly, which makes this the best choice for mastering a behavior. But extinction also occurs rapidly. When reinforcement stops—

Real life rarely provides continuous reinforcement. Salespeople do not make a sale with every pitch. But they persist because their efforts are occasionally rewarded. This persistence is typical with partial (intermittent) reinforcement schedules, in which responses are sometimes reinforced, sometimes not. Learning is slower to appear, but resistance to extinction is greater than with continuous reinforcement. Imagine a pigeon that has learned to peck a key to obtain food. If you gradually phase out the food delivery until it occurs only rarely, in no predictable pattern, the pigeon may peck 150,000 times without a reward (Skinner, 1953). Slot machines reward gamblers in much the same way—


Lesson for parents: Partial reinforcement also works with children. Occasionally giving in to children’s tantrums for the sake of peace and quiet intermittently reinforces the tantrums. This is the very best procedure for making a behavior persist.

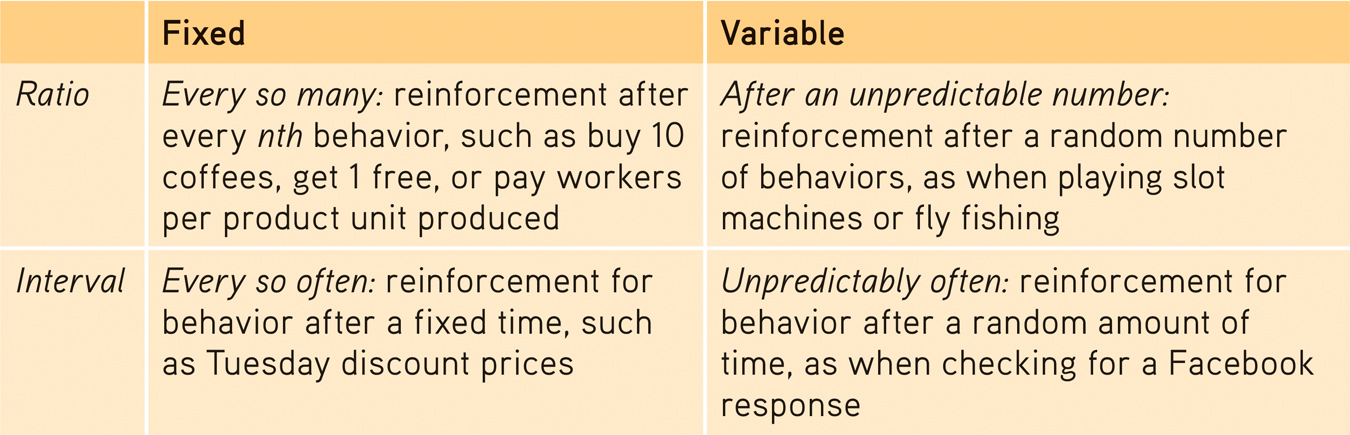

Skinner (1961) and his collaborators compared four schedules of partial reinforcement. Some are rigidly fixed, some unpredictably variable.

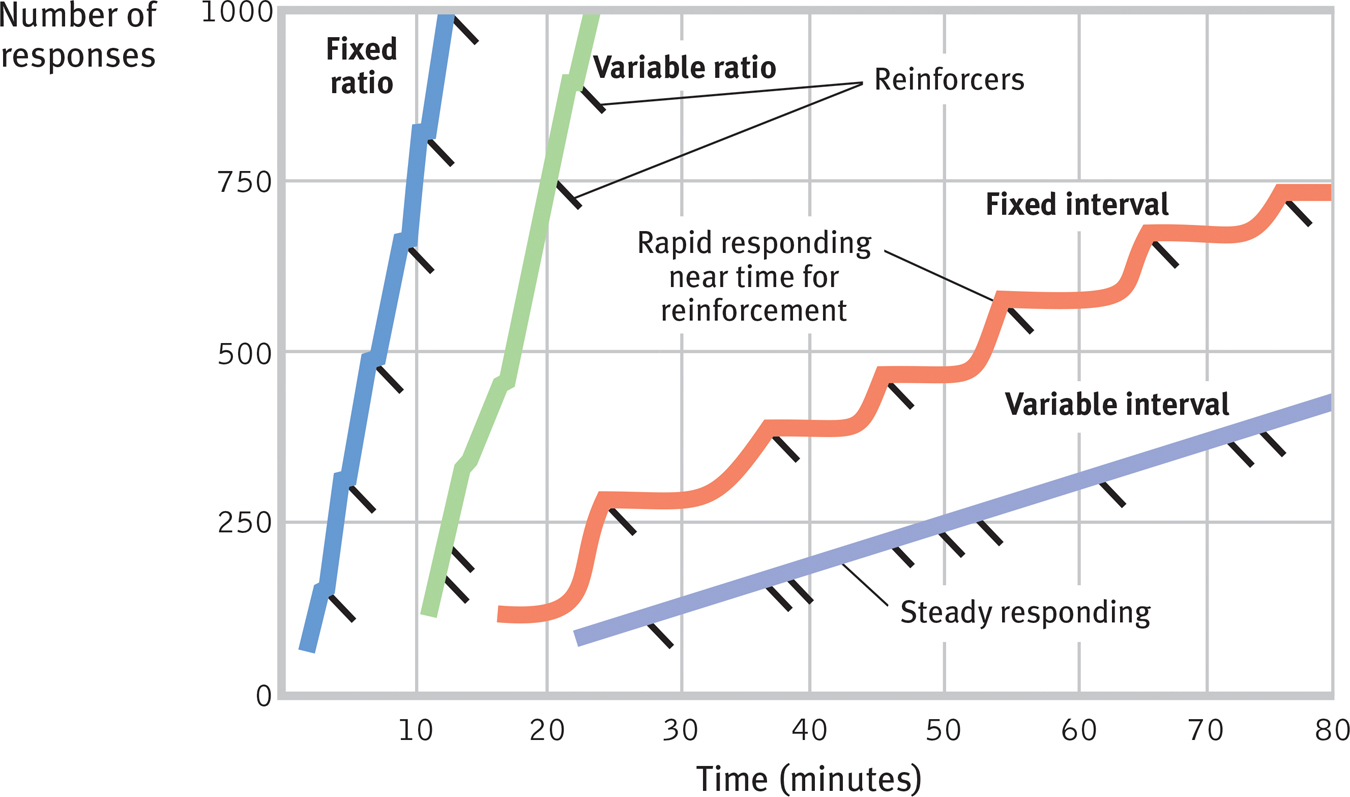

Fixed-ratio schedules reinforce behavior after a set number of responses. Coffee shops may reward us with a free drink after every 10 purchased. Rats may be reinforced on a fixed ratio of, say, one food pellet for every 30 responses. Once conditioned, animals will pause only briefly after a reinforcer before returning to a high rate of responding (FIGURE 22.3).

Figure 22.3 Intermittent reinforcement schedules. Skinner’s (1961) laboratory pigeons produced these response patterns to each of four reinforcement schedules. (Reinforcers are indicated by diagonal marks.) For people, as for pigeons, reinforcement linked to number of responses (a ratio schedule) produces a higher response rate than reinforcement linked to amount of time elapsed (an interval schedule). But the predictability of the reward also matters. An unpredictable (variable) schedule produces more consistent responding than does a predictable (fixed) schedule.

“The charm of fishing is that it is the pursuit of what is elusive but attainable, a perpetual series of occasions for hope.”

Scottish author John Buchan (1875–1940)

Variable-ratio schedules provide reinforcers after a seemingly unpredictable number of responses. This unpredictable reinforcement is what slot-

Fixed-interval schedules reinforce the first response after a fixed time period. Animals on this type of schedule tend to respond more frequently as the anticipated time for reward draws near. People check more frequently for the mail as the delivery time approaches. A hungry child jiggles the Jell-

Variable-interval schedules reinforce the first response after varying time intervals. Like the longed-

Table 22.2 Schedules of Reinforcement

In general, response rates are higher when reinforcement is linked to the number of responses (a ratio schedule) rather than to time (an interval schedule). But responding is more consistent when reinforcement is unpredictable (a variable schedule) than when it is predictable (a fixed schedule). Animal behaviors differ, yet Skinner (1956) contended that the reinforcement principles of operant conditioning are universal. It matters little, he said, what response, what reinforcer, or what species you use. The effect of a given reinforcement schedule is pretty much the same: “Pigeon, rat, monkey, which is which? It doesn’t matter…. Behavior shows astonishingly similar properties.”
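The rules that define the four schedules can be written down as a short simulation. The sketch below is illustrative rather than a model of Skinner's apparatus: the class names, the `respond(t)` method, and the uniform distributions used for the variable schedules are all assumptions made for this example. It shows only when a reinforcer is delivered, not how an animal's response rate changes.

```python
import random


class FixedRatio:
    """Reinforce every n-th response."""

    def __init__(self, n):
        self.n, self.count = n, 0

    def respond(self, t):
        self.count += 1
        if self.count >= self.n:
            self.count = 0
            return True
        return False


class VariableRatio:
    """Reinforce after an unpredictable number of responses averaging mean_n."""

    def __init__(self, mean_n, rng):
        self.mean_n, self.rng, self.count = mean_n, rng, 0
        self.target = self._draw()

    def _draw(self):
        # Assumption: uniform over 1..(2*mean_n - 1), so the mean is mean_n.
        return self.rng.randint(1, 2 * self.mean_n - 1)

    def respond(self, t):
        self.count += 1
        if self.count >= self.target:
            self.count, self.target = 0, self._draw()
            return True
        return False


class FixedInterval:
    """Reinforce the first response after a fixed time since the last reinforcer."""

    def __init__(self, interval):
        self.interval, self.available_at = interval, interval

    def respond(self, t):
        if t >= self.available_at:
            self.available_at = t + self.interval
            return True
        return False


class VariableInterval:
    """Reinforce the first response after a varying time since the last reinforcer."""

    def __init__(self, mean_interval, rng):
        self.mean_interval, self.rng = mean_interval, rng
        self.available_at = self._draw()

    def _draw(self):
        # Assumption: uniform over 0..2*mean_interval, so the mean is mean_interval.
        return self.rng.uniform(0, 2 * self.mean_interval)

    def respond(self, t):
        if t >= self.available_at:
            self.available_at = t + self._draw()
            return True
        return False


def count_reinforcers(schedule, response_times):
    """Count reinforcers earned by responding at each of the given times."""
    return sum(schedule.respond(t) for t in response_times)


slow = [float(t) for t in range(1, 61)]   # one response per time unit
fast = [t / 4 for t in range(1, 241)]     # four responses per time unit
for label, make in [("fixed ratio", lambda: FixedRatio(10)),
                    ("fixed interval", lambda: FixedInterval(10))]:
    print(label, count_reinforcers(make(), slow), count_reinforcers(make(), fast))
```

Under these assumptions, responding four times as fast earns four times as many reinforcers on the fixed-ratio schedule but no more on the fixed-interval schedule. That is consistent with the text's point that ratio schedules support higher response rates than interval schedules.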


RETRIEVAL PRACTICE

- Telemarketers are reinforced by which schedule? People checking the oven to see if the cookies are done are on which schedule? Airline frequent-flyer programs that offer a free flight after every 25,000 miles of travel are using which reinforcement schedule?

Telemarketers are reinforced on a variable-

Punishment


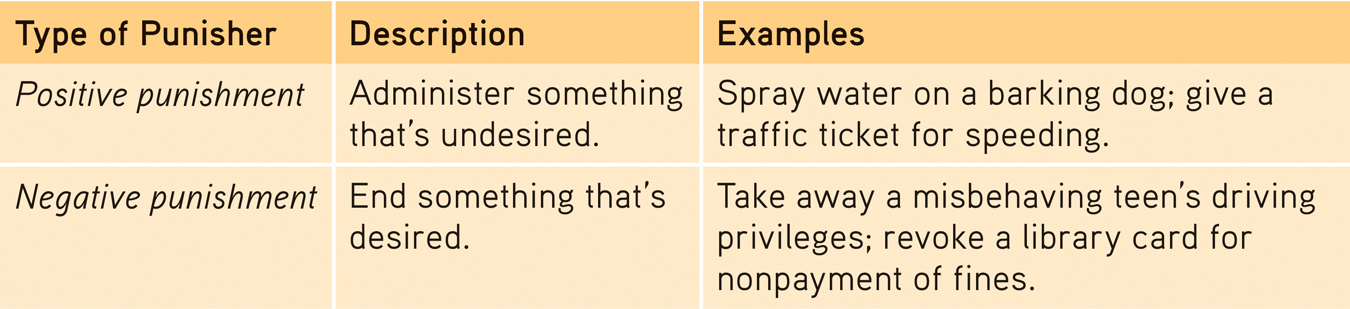

Reinforcement increases a behavior; punishment does the opposite. A punisher is any consequence that decreases the frequency of a preceding behavior (TABLE 22.3). Swift and sure punishers can powerfully restrain unwanted behavior. The rat that is shocked after touching a forbidden object and the child who is burned by touching a hot stove will learn not to repeat those behaviors. A dog that has learned to come running at the sound of an electric can opener will stop coming if its owner runs the machine to attract the dog and then banish it to the basement. Children’s compliance often increases after a reprimand and a “time out” punishment (Owen et al., 2012).

Table 22.3 Ways to Decrease Behavior

Criminal behavior, much of it impulsive, is also influenced more by swift and sure punishers than by the threat of severe sentences (Darley & Alter, 2012). Thus, when Arizona introduced an exceptionally harsh sentence for first-


How should we interpret the punishment studies in relation to parenting practices? Many psychologists and supporters of nonviolent parenting note four major drawbacks of physical punishment (Gershoff, 2002; Marshall, 2002).

- Punished behavior is suppressed, not forgotten. This temporary state may (negatively) reinforce parents’ punishing behavior. The child swears, the parent swats, the parent hears no more swearing and feels the punishment successfully stopped the behavior. No wonder spanking is a hit with so many U.S. parents of 3- and 4-year-olds—more than 9 in 10 of whom acknowledged spanking their children (Kazdin & Benjet, 2003).

- Punishment teaches discrimination among situations. In operant conditioning, discrimination occurs when an organism learns that certain responses, but not others, will be reinforced. Did the punishment effectively end the child’s swearing? Or did the child simply learn that while it’s not okay to swear around the house, it’s okay to swear elsewhere?

- Punishment can teach fear. In operant conditioning, generalization occurs when an organism’s response to similar stimuli is also reinforced. A punished child may associate fear not only with the undesirable behavior but also with the person who delivered the punishment or where it occurred. Thus, children may learn to fear a punishing teacher and try to avoid school, or may become more anxious (Gershoff et al., 2010). For such reasons, most European countries and most U.S. states now ban hitting children in schools and child-care institutions (stophitting.com). Thirty-three countries, including those in Scandinavia, further outlaw hitting by parents, providing children the same legal protection given to spouses.

- Physical punishment may increase aggression by modeling aggression as a way to cope with problems. Studies find that spanked children are at increased risk for aggression (MacKenzie et al., 2013). We know, for example, that many aggressive delinquents and abusive parents come from abusive families (Straus & Gelles, 1980; Straus et al., 1997).

Some researchers note a problem. Well, yes, they say, physically punished children may be more aggressive, for the same reason that people who have undergone psychotherapy are more likely to suffer depression—

If one adjusts for preexisting antisocial behavior, then an occasional single swat or two to misbehaving 2-

- The swat is used only as a backup when milder disciplinary tactics, such as a timeout (removing children from reinforcing surroundings), fail.

- The swat is combined with a generous dose of reasoning and reinforcing.

Other researchers remain unconvinced. After controlling for prior misbehavior, they report that more frequent spankings of young children predict future aggressiveness (Grogan-

Parents of delinquent youths are often unaware of how to achieve desirable behaviors without screaming, hitting, or threatening their children with punishment (Patterson et al., 1982). Training programs can help transform dire threats (“You clean up your room this minute or no dinner!”) into positive incentives (“You’re welcome at the dinner table after you get your room cleaned up”). Stop and think about it. Aren’t many threats of punishment just as forceful, and perhaps more effective, when rephrased positively? Thus, “If you don’t get your homework done, there’ll be no car” would better be phrased as ….


In classrooms, too, teachers can give feedback on papers by saying, “No, but try this …” and “Yes, that’s it!” Such responses reduce unwanted behavior while reinforcing more desirable alternatives. Remember: Punishment tells you what not to do; reinforcement tells you what to do. Thus, punishment trains a particular sort of morality—

What punishment often teaches, said Skinner, is how to avoid it. Most psychologists now favor an emphasis on reinforcement: Notice people doing something right and affirm them for it.

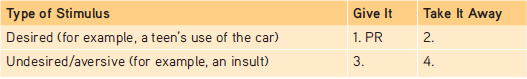

RETRIEVAL PRACTICE

- Fill in the three blanks below with one of the following terms: positive reinforcement (PR), negative reinforcement (NR), positive punishment (PP), and negative punishment (NP). We have provided the first answer (PR) for you.

1. PR (positive reinforcement); 2. NP (negative punishment); 3. PP (positive punishment); 4. NR (negative reinforcement)
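The four terms in this exercise form a two-by-two grid: whether a stimulus is added or removed after the behavior, and whether the behavior then becomes more or less frequent. A minimal sketch of that grid follows. The function name `classify_consequence` and its string arguments are hypothetical, chosen only for illustration.

```python
def classify_consequence(stimulus, behavior):
    """Name the operant consequence from two observations.

    stimulus: "added" or "removed" (what happens after the behavior)
    behavior: "increases" or "decreases" (what the behavior does afterward)
    """
    if stimulus not in ("added", "removed"):
        raise ValueError("stimulus must be 'added' or 'removed'")
    if behavior not in ("increases", "decreases"):
        raise ValueError("behavior must be 'increases' or 'decreases'")
    sign = "positive" if stimulus == "added" else "negative"
    kind = "reinforcement" if behavior == "increases" else "punishment"
    return f"{sign} {kind}"


# Examples drawn from this section:
print(classify_consequence("added", "increases"))    # Billy's whining gets his shoes tied
print(classify_consequence("removed", "increases"))  # crying stops once the parents give in
print(classify_consequence("added", "decreases"))    # a burn from touching a hot stove
print(classify_consequence("removed", "decreases"))  # a timeout from reinforcing surroundings
```

Note that "positive" and "negative" here mean adding or removing a stimulus, not good or bad. The label depends on the effect on behavior, not on the intent of whoever delivers the consequence.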